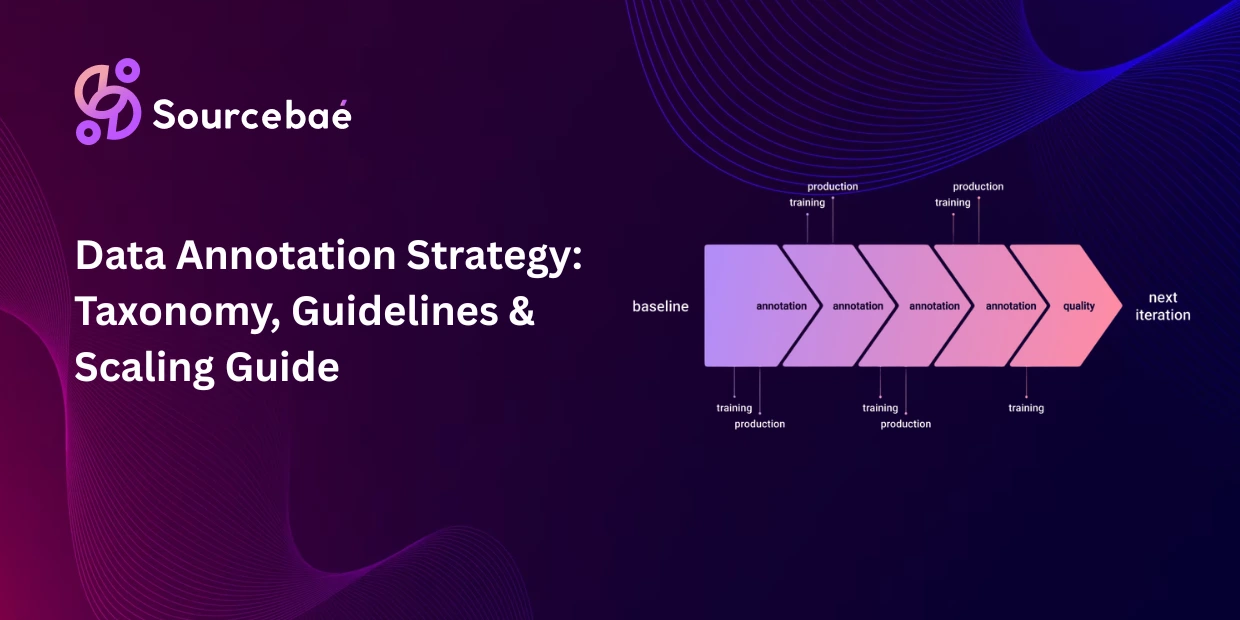

A data annotation strategy is the end-to-end plan that governs how an organization produces, manages, and iterates on the labeled data that trains its AI systems. In 2026, annotation is no longer a one-off preprocessing step buried in the early stages of a project; it is a continuous operational function that runs in parallel with model training and deployment, feeding errors, edge cases, and distributional shifts back into an evolving training dataset. Organizations that treat annotation as a strategic capability rather than a tactical task consistently build better models, faster.

A 2025 MIT study found that models trained on poorly annotated data experienced up to 40% degradation in accuracy compared to those trained on expert-reviewed datasets. ML teams report spending over 80% of their time improving training data quality rather than tuning model architectures. The data annotation market itself is projected to reach $19.92 billion by 2033 at a 27.47% CAGR, a trajectory that reflects how seriously the industry takes the infrastructure that produces training data. Yet many teams still begin annotation without a coherent strategy, jumping into labeling before defining their taxonomy, writing guidelines, or planning how they will scale.

This post provides a step-by-step framework for building an annotation strategy from the ground up, from taxonomy design and guideline creation through annotator recruitment, calibration, pilot execution, production scaling, and continuous iteration. Each section is designed to be actionable, whether you are building your first annotation pipeline or restructuring an existing one. (For the foundational annotation concepts that this strategy builds upon, see our [comprehensive guide to data annotation Pillar Page].)

Step 1: Defining Your Annotation Taxonomy

Annotation taxonomy design is the foundational decision that shapes every downstream aspect of your annotation workflow. The taxonomy defines what labels exist, how they relate to each other, and what distinctions the model will be able to learn. Get the taxonomy wrong, and no amount of annotator training or QA will fix it.

The MECE principle

Effective taxonomies follow the “mutually exclusive and collectively exhaustive” (MECE) principle. Mutually exclusive means each data point maps to exactly one label within any given category; there should be no ambiguity about which label applies. Collectively exhaustive means the label set covers all possible cases the model will encounter; there should be no real-world input that falls outside the taxonomy. In practice, perfect MECE is aspirational; the goal is to get close enough that edge cases are the exception, not the norm.

Granularity trade-offs

Every taxonomy involves a granularity decision: how specific should the labels be? A coarse taxonomy (“vehicle”) is easier to annotate consistently but limits what the model can learn. A fine-grained taxonomy (“sedan,” “hatchback,” “SUV,” “pickup truck,” “van,” “motorcycle”) enables richer model capabilities but increases annotator cognitive load and inter-annotator disagreement. The right granularity depends on the downstream task. An object detection model that needs to distinguish vehicle types requires fine-grained labels; a traffic counting model may only need “vehicle” versus “pedestrian.”

Hierarchical structure

Complex taxonomies benefit from hierarchical organization: broad categories at the top level, with increasingly specific subcategories beneath. “Vehicle → Passenger Vehicle → Sedan” allows annotators to work at the appropriate level for their task and enables models to learn at multiple granularity levels. Hierarchical taxonomies also simplify taxonomy updates: adding a new subcategory does not invalidate existing annotations at the parent level.
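As a rough illustration, a hierarchy like the one above can be stored as a child-to-parent map. The sketch below is only one possible representation; the `PARENT` table and the helper names `path_to_root` and `roll_up` are hypothetical, not part of any particular annotation tool.

```python
# Hypothetical taxonomy: each label maps to its parent (None marks a top-level category).
PARENT = {
    "Vehicle": None,
    "Passenger Vehicle": "Vehicle",
    "Sedan": "Passenger Vehicle",
    "Hatchback": "Passenger Vehicle",
    "Pedestrian": None,
}


def path_to_root(label: str) -> list[str]:
    """Return the label followed by its ancestors, ending at the top-level category."""
    path = []
    current = label
    while current is not None:
        path.append(current)
        current = PARENT[current]
    return path


def roll_up(label: str, depth: int) -> str:
    """Map a label to its ancestor at the given depth (0 = top level).

    Labels that already sit at or above that depth are returned unchanged.
    """
    root_first = path_to_root(label)[::-1]
    return root_first[min(depth, len(root_first) - 1)]


print(path_to_root("Sedan"))  # ['Sedan', 'Passenger Vehicle', 'Vehicle']
print(roll_up("Sedan", 0))    # Vehicle
print(roll_up("Sedan", 1))    # Passenger Vehicle
```

Because fine-grained labels can always be rolled up to a parent, annotations made at the "Sedan" level remain usable for a model that only needs "Vehicle".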

Mapping labels to downstream actions

Before finalizing the taxonomy, map each label to the model’s intended downstream behavior. If a label exists in the taxonomy but the model never needs to distinguish it from an adjacent label, the distinction adds annotation cost without model benefit. Conversely, if the model needs to make a distinction that the taxonomy does not capture, the training data cannot teach it. This alignment check (taxonomy maps to model requirements, which map to real-world deployment behavior) is the most important validation step in taxonomy design.
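One way to make this check concrete is to write the label-to-behavior mapping down and inspect it mechanically. The sketch below assumes a hypothetical traffic-counting deployment; the function name `alignment_report`, the action names, and the example labels are all illustrative assumptions.

```python
from collections import defaultdict


def alignment_report(labels, label_to_action, required_actions):
    """Compare taxonomy labels with the behaviors the deployed model needs."""
    by_action = defaultdict(list)
    for label in sorted(labels):
        if label in label_to_action:
            by_action[label_to_action[label]].append(label)
    return {
        # Labels that no downstream behavior uses.
        "unmapped_labels": sorted(set(labels) - set(label_to_action)),
        # Labels that trigger the same behavior: candidates for merging.
        "shared_action_groups": {a: ls for a, ls in by_action.items() if len(ls) > 1},
        # Required behaviors that no label can teach.
        "uncovered_actions": sorted(set(required_actions) - set(by_action)),
    }


# Hypothetical traffic-counting example.
labels = {"sedan", "hatchback", "pedestrian", "traffic cone"}
label_to_action = {
    "sedan": "count_vehicle",
    "hatchback": "count_vehicle",
    "pedestrian": "count_pedestrian",
}
required_actions = {"count_vehicle", "count_pedestrian", "count_cyclist"}

report = alignment_report(labels, label_to_action, required_actions)
print(report)
```

A shared-action group is only a prompt for review: a team may still keep "sedan" and "hatchback" separate if another planned model needs the distinction.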

Version control from day one

Taxonomies evolve. New categories emerge, existing categories split or merge, definitions are refined. Every taxonomy change must be versioned, documented, and propagated to active annotation guidelines. Un-versioned taxonomy changes are a leading cause of label inconsistency in production annotation pipelines. (For how taxonomy design connects to project-level planning, see our post on [annotation project management Post 5].)

Step 2: Writing Effective Annotation Guidelines

Knowing how to create annotation guidelines that annotators can follow consistently is arguably the highest-leverage skill in annotation operations. Guidelines are the bridge between the taxonomy (what labels exist) and the annotated dataset (what labels get applied). Every ambiguity in the guidelines becomes noise in the data.

Structure of effective guidelines

A production-grade annotation guidelines template includes the following components:

Task definition: a clear, one-paragraph statement of what the annotator is doing and why. This section answers the question “What am I labeling and what will the labels be used for?” so annotators understand the purpose behind their work.

Label definitions: a precise, unambiguous definition for every label in the taxonomy. Each definition should specify what the label means, what boundary conditions separate it from adjacent labels, and any special rules (e.g., “Label ‘partially occluded’ if at least 50% of the object is visible; label ‘heavily occluded’ if less than 50% is visible”).

Positive examples: at least 2–3 correctly labeled examples for every label, showing annotators exactly what a correct annotation looks like. These examples should include both easy cases (obvious instances) and borderline cases (instances near the label boundary).

Negative examples: at least 1–2 examples for every label showing what the label does not include. Negative examples are particularly important for labels that are frequently confused. “This is NOT a sedan; note the raised suspension and cargo bed, which make it a pickup truck.”

Edge case protocols: explicit instructions for scenarios that the main definitions do not clearly resolve. “If the object is too blurry to identify, label it as ‘uncertain’ rather than guessing.” “If a bounding box would overlap with another object, prioritize the foreground object.” These protocols prevent annotators from improvising different solutions to the same ambiguity.

Annotation tool instructions: step-by-step visual instructions showing how to use the specific annotation tool for this task. Screenshots, keyboard shortcuts, and workflow sequences reduce tool-related errors.
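The per-label requirements in this template (a definition plus minimum numbers of positive and negative examples) lend themselves to an automated completeness check. The following is a minimal sketch under assumed names: `LabelGuideline`, `guideline_gaps`, the example definitions, and the image file names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class LabelGuideline:
    name: str
    definition: str
    positive_examples: list[str] = field(default_factory=list)
    negative_examples: list[str] = field(default_factory=list)


def guideline_gaps(entries, min_positive=2, min_negative=1):
    """Return human-readable problems; an empty list means the minimums are met."""
    problems = []
    for entry in entries:
        if not entry.definition.strip():
            problems.append(f"{entry.name}: missing definition")
        if len(entry.positive_examples) < min_positive:
            problems.append(f"{entry.name}: needs at least {min_positive} positive examples")
        if len(entry.negative_examples) < min_negative:
            problems.append(f"{entry.name}: needs at least {min_negative} negative examples")
    return problems


entries = [
    LabelGuideline("sedan", "Four-door passenger car with a separate trunk.",
                   ["img_001.png", "img_014.png"], ["img_020.png"]),
    LabelGuideline("pickup truck", "Vehicle with an open cargo bed.", ["img_007.png"]),
]
for problem in guideline_gaps(entries):
    print(problem)
```

A check like this only counts examples; whether the examples actually cover the borderline cases still needs human review.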

The single most common mistake

Guidelines that define what labels mean but do not show how they apply to ambiguous real-world examples. Annotators who understand the definition of “aggressive driving” in the abstract will still disagree on specific video clips unless the guidelines include annotated examples of borderline cases with the reasoning explained.

Step 3: Annotator Recruitment and Training

Annotator training best practices have evolved significantly as the annotation industry has matured. The 2026 consensus is that annotator quality is the single largest determinant of dataset quality, and that investing in recruitment and training pays returns throughout the project lifecycle.

Matching expertise to task complexity

Not every annotation task requires domain experts, and not every task can be done by generalists. The matching framework is straightforward: routine tasks with clear, unambiguous labels (object detection with well-defined categories, simple text classification) can be performed by trained generalists. Tasks requiring domain knowledge (medical imaging, legal document classification, financial compliance tagging) require domain specialists.

Tasks requiring subjective judgment at the expert level (RLHF preference ranking, content moderation for nuanced policy, aesthetic quality assessment) require carefully calibrated expert annotators. Mismatching annotator expertise to task complexity, either by using generalists for expert tasks or by overpaying experts for routine work, is one of the most common resource allocation errors in annotation operations.

Qualification testing

Before any annotator works on production data, they should pass a qualification test using gold-standard examples (pre-labeled data with known correct answers). Industry standard practice is to require 85–90% accuracy on qualification tests, though the threshold should be calibrated to the task’s difficulty and the consequence of errors. Annotators who fall below the threshold receive additional training and re-test; those who remain below threshold are not assigned to the project.
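Scoring a qualification test comes down to comparing an annotator's answers with the gold labels and applying the threshold. The sketch below assumes an 85% pass mark and a hypothetical `qualification_result` helper; the item IDs and labels are made up for illustration.

```python
def qualification_result(answers, gold, threshold=0.85):
    """Score an annotator's answers against gold labels.

    Both arguments map item IDs to labels; unanswered gold items count as wrong.
    Returns (accuracy, passed).
    """
    if not gold:
        raise ValueError("gold set must not be empty")
    correct = sum(1 for item_id, label in gold.items() if answers.get(item_id) == label)
    accuracy = correct / len(gold)
    return accuracy, accuracy >= threshold


gold = {"q1": "sedan", "q2": "SUV", "q3": "van", "q4": "sedan", "q5": "motorcycle"}
answers = {"q1": "sedan", "q2": "SUV", "q3": "pickup truck", "q4": "sedan", "q5": "motorcycle"}

accuracy, passed = qualification_result(answers, gold)
print(f"accuracy={accuracy:.2f} passed={passed}")  # accuracy=0.80 passed=False
```

In this example the annotator gets four of five items right, falls below the assumed threshold, and would be routed to additional training before a re-test.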

Onboarding training

Effective onboarding includes a walkthrough of the guidelines with live discussion of edge cases, hands-on practice with the annotation tool on sample data (not production data), a supervised trial period where the annotator’s work is reviewed at 100% before they move to production, and a feedback session addressing any errors or misunderstandings from the trial.

Ongoing performance monitoring

Qualification testing is a one-time gate; ongoing monitoring is the continuous check. Gold-standard examples embedded in the production queue (where annotators do not know which items are test cases) provide real-time accuracy measurement. Annotator-specific dashboards tracking accuracy, speed, and agreement with peers enable early detection of drift, fatigue, or systematic misunderstandings. (For how project-level workforce planning scales these practices, see our post on [scaling annotation operations Post 6].)
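A rolling accuracy over each annotator's most recent embedded gold items is one simple way to implement this kind of monitoring. The class below is a sketch, not a reference to any specific platform; `GoldMonitor`, the window size, the alert level, and the minimum item count are all assumed values.

```python
from collections import defaultdict, deque


class GoldMonitor:
    """Track each annotator's accuracy over their most recent embedded gold items."""

    def __init__(self, window=50, alert_below=0.85, min_items=10):
        self.alert_below = alert_below
        self.min_items = min_items
        # Each annotator gets a fixed-size history; old results fall off automatically.
        self._results = defaultdict(lambda: deque(maxlen=window))

    def record(self, annotator_id, submitted_label, gold_label):
        self._results[annotator_id].append(submitted_label == gold_label)

    def accuracy(self, annotator_id):
        results = self._results.get(annotator_id)
        return sum(results) / len(results) if results else None

    def flagged(self):
        """Annotators with enough gold items whose rolling accuracy is below the alert level."""
        return sorted(
            annotator for annotator, results in self._results.items()
            if len(results) >= self.min_items
            and sum(results) / len(results) < self.alert_below
        )


monitor = GoldMonitor(window=20, min_items=10)
for i in range(10):
    monitor.record("ann_a", "sedan", "sedan")
    monitor.record("ann_b", "sedan" if i < 7 else "SUV", "sedan")

print(monitor.flagged())  # ['ann_b']
```

The minimum item count keeps a new annotator from being flagged on the basis of one or two early mistakes.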

Step 4: Calibration Rounds and Pilot Execution

The pilot phase is where strategy meets reality. Before scaling to production, every annotation project should run a structured pilot that tests the taxonomy, validates the guidelines, calibrates the annotators, and establishes quality baselines.

Calibration rounds. A calibration round is a structured session where all annotators label the same set of data points independently, then compare and discuss their results. The purposes are to identify labels where annotators disagree (signaling guideline ambiguity), to align annotators’ interpretations of borderline cases, and to measure initial inter-annotator agreement (IAA) as a baseline.

IAA targets for pilot. The pilot should achieve minimum IAA thresholds before proceeding to production. For classification tasks, Cohen’s Kappa of 0.75 or higher is a reasonable target. For segmentation tasks, a Dice coefficient of 0.80+ on well-defined structures signals readiness. If the pilot IAA falls below these thresholds, the problem is almost always in the guidelines (ambiguous definitions, missing edge case protocols) rather than in the annotators, so revise the guidelines before adding more training.
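For reference, both metrics are short to compute by hand. Cohen's Kappa is $\kappa = (p_o - p_e) / (1 - p_e)$, where $p_o$ is observed agreement and $p_e$ is the agreement expected by chance; the Dice coefficient is $2|A \cap B| / (|A| + |B|)$. The sketch below uses only the standard library, and the example labels and masks are invented. Cohen's Kappa is defined for exactly two annotators, so with a larger team it would typically be computed per pair.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's Kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


def dice(mask_a, mask_b):
    """Dice coefficient between two masks given as sets of pixel coordinates."""
    if not mask_a and not mask_b:
        return 1.0
    return 2 * len(mask_a & mask_b) / (len(mask_a) + len(mask_b))


ann_a = ["car", "car", "car", "car", "ped", "ped", "ped", "ped", "car", "ped"]
ann_b = ["car", "car", "car", "ped", "ped", "ped", "ped", "ped", "car", "ped"]
print(f"kappa={cohens_kappa(ann_a, ann_b):.2f}")  # kappa=0.80

mask_a = {(0, 0), (0, 1), (1, 0), (1, 1)}
mask_b = {(0, 1), (1, 1), (1, 2)}
print(f"dice={dice(mask_a, mask_b):.2f}")  # dice=0.57
```

In the example the two annotators agree on 9 of 10 items, but half of that agreement would be expected by chance, which is why Kappa (0.80) is lower than raw agreement (0.90).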

The pilot-to-production gate. A pilot should include at least 200–500 annotated examples (enough to calculate meaningful IAA metrics), at least two independent annotators per example (to measure agreement), at least one adjudication round (where disagreements are reviewed by a senior annotator who provides the definitive label), and a post-pilot guideline revision (incorporating lessons from the calibration rounds). Only after the pilot demonstrates acceptable IAA and the guidelines have been revised to address discovered ambiguities should the project proceed to production.
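The gate criteria can be written down as an explicit check so that the go/no-go decision is reproducible. This is a sketch under assumptions: `PilotSummary` and `gate_failures` are hypothetical names, and the defaults simply take the lower bounds mentioned above (200 examples, two annotators per example, Kappa of 0.75).

```python
from dataclasses import dataclass


@dataclass
class PilotSummary:
    examples_annotated: int
    min_annotators_per_example: int
    adjudication_rounds: int
    guidelines_revised: bool
    iaa: float  # e.g. Cohen's Kappa for a classification task


def gate_failures(pilot, min_examples=200, min_annotators=2, iaa_target=0.75):
    """Return the unmet gate criteria; an empty list means the pilot may proceed."""
    checks = [
        (pilot.examples_annotated >= min_examples,
         f"fewer than {min_examples} annotated examples"),
        (pilot.min_annotators_per_example >= min_annotators,
         f"fewer than {min_annotators} annotators per example"),
        (pilot.adjudication_rounds >= 1, "no adjudication round"),
        (pilot.guidelines_revised, "guidelines not revised after the pilot"),
        (pilot.iaa >= iaa_target,
         f"IAA {pilot.iaa:.2f} is below target {iaa_target:.2f}"),
    ]
    return [message for passed, message in checks if not passed]


pilot = PilotSummary(examples_annotated=320, min_annotators_per_example=2,
                     adjudication_rounds=1, guidelines_revised=True, iaa=0.71)
print(gate_failures(pilot))  # ['IAA 0.71 is below target 0.75']
```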

Step 5: Scaling from Pilot to Production

Scaling data annotation from pilot to production is where many annotation projects fail, not because the methodology is wrong, but because the operational infrastructure that supports consistent quality at volume was not designed for scale.

What changes at scale

In a pilot, a small team of carefully calibrated annotators labels a few hundred examples under close supervision. In production, a larger team (potentially across multiple shifts, time zones, and sites) labels thousands or millions of examples with less individual supervision. The challenges that emerge at scale include quality drift (annotators’ labels gradually diverge from the calibrated standard as time passes and attention wanders), onboarding bottleneck (new annotators joining mid-project must be brought to the same calibration level as the original team), guideline evolution (new edge cases emerge at scale that the pilot did not anticipate, requiring guideline updates that must propagate to the entire team), and throughput pressure (deadlines and volume targets can incentivize speed over accuracy if the incentive structure is not carefully designed).

Operational infrastructure for scale

Production annotation requires several infrastructure elements that pilots do not. Automated quality monitoring uses embedded gold-standard examples to continuously measure annotator accuracy without manual auditing. Tiered review routes all annotations through an initial annotator, with a sample reviewed by a senior annotator, and disagreements escalated to an adjudicator. Versioned guideline management ensures that all annotators are working from the same guideline version and that changes are communicated, trained, and verified. Performance dashboards give project managers real-time visibility into throughput, accuracy, agreement, and annotator-level metrics. Feedback loops connect downstream model performance back to annotation quality: if the model underperforms on a specific category, the annotation team investigates whether label quality for that category is the root cause.
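The tiered review flow described above can be sketched as a small routing function. Everything here is an assumption made for illustration: the 10% sample rate, the stage names, and the choice to pick the review sample by hashing the item ID (which keeps the selection reproducible across runs).

```python
import hashlib


def in_review_sample(item_id: str, sample_rate: float) -> bool:
    """Deterministically select roughly sample_rate of items by hashing the item ID."""
    digest = hashlib.sha256(item_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") / 2**32  # value in [0, 1)
    return bucket < sample_rate


def route(item_id, annotator_label, reviewer_label=None, sample_rate=0.1):
    """Return the next stage: 'accept', 'senior_review', or 'adjudication'."""
    if reviewer_label is None:
        # First pass: only the sampled items go on to a senior annotator.
        return "senior_review" if in_review_sample(item_id, sample_rate) else "accept"
    # Second pass: a disagreement between annotator and reviewer is escalated.
    return "accept" if reviewer_label == annotator_label else "adjudication"


print(route("img_0042", "sedan", reviewer_label="sedan"))  # accept
print(route("img_0042", "sedan", reviewer_label="SUV"))    # adjudication
```

A team might raise the sample rate for new annotators or for categories where the model underperforms, rather than using one fixed rate.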

The hybrid workforce model

In 2026, the prevailing data annotation workflow design for production combines in-house annotators (who handle complex, domain-specific, or sensitive tasks) with outsourced or crowdsourced annotators (who handle volume-intensive, well-defined tasks) and AI-assisted pre-labeling (which generates initial labels that human annotators correct). This hybrid approach allocates each labor type to the tasks where it delivers the most value. (For how AI-assisted pre-labeling integrates into this workflow, see our post on [AI-assisted annotation Post 24].)

Step 6: Feedback Loops and Continuous Iteration

The annotation process does not end when the dataset is delivered. In 2026, the most effective annotation strategies treat data production as a continuous cycle rather than a batch process.

Model-to-annotation feedback

When the trained model is evaluated, its errors reveal gaps in the training data. A model that systematically misclassifies a specific category may be suffering from inconsistent labels, under-representation, or ambiguous guidelines for that category. Feeding model error analysis back into the annotation pipeline (revising guidelines, re-annotating problematic subsets, collecting additional examples for underperforming categories) is the mechanism that turns a static dataset into a continuously improving one.

Production-to-annotation feedback

When the deployed model encounters real-world inputs that differ from the training distribution, those inputs become the highest-value annotation targets. Uncertainty-driven routing (flagging inputs where the model’s confidence is low) creates a stream of production-relevant data that, once annotated, addresses the specific gaps the model is experiencing in deployment.
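A minimal form of uncertainty-driven routing flags any prediction whose top class probability falls below a threshold. The sketch below assumes the model exposes per-class probabilities; the 0.7 threshold, the function name `needs_annotation`, and the example predictions are illustrative. Top-class probability is only one possible uncertainty signal; entropy or the margin between the top two classes are common alternatives.

```python
def needs_annotation(class_probabilities, confidence_threshold=0.7):
    """Flag a prediction when the top class probability is below the threshold."""
    return max(class_probabilities.values()) < confidence_threshold


# Hypothetical production predictions keyed by input ID.
predictions = {
    "frame_001": {"sedan": 0.95, "SUV": 0.04, "van": 0.01},
    "frame_002": {"sedan": 0.45, "SUV": 0.40, "van": 0.15},
}

annotation_queue = [
    item_id for item_id, probs in predictions.items() if needs_annotation(probs)
]
print(annotation_queue)  # ['frame_002']
```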

Guideline evolution

Every feedback cycle should include a guideline review: are the definitions still appropriate? Have new edge cases emerged that the guidelines do not address? Has the taxonomy evolved (new categories needed, existing categories split or merged)? Guidelines should be living documents with version histories, change logs, and training updates for annotators affected by changes.

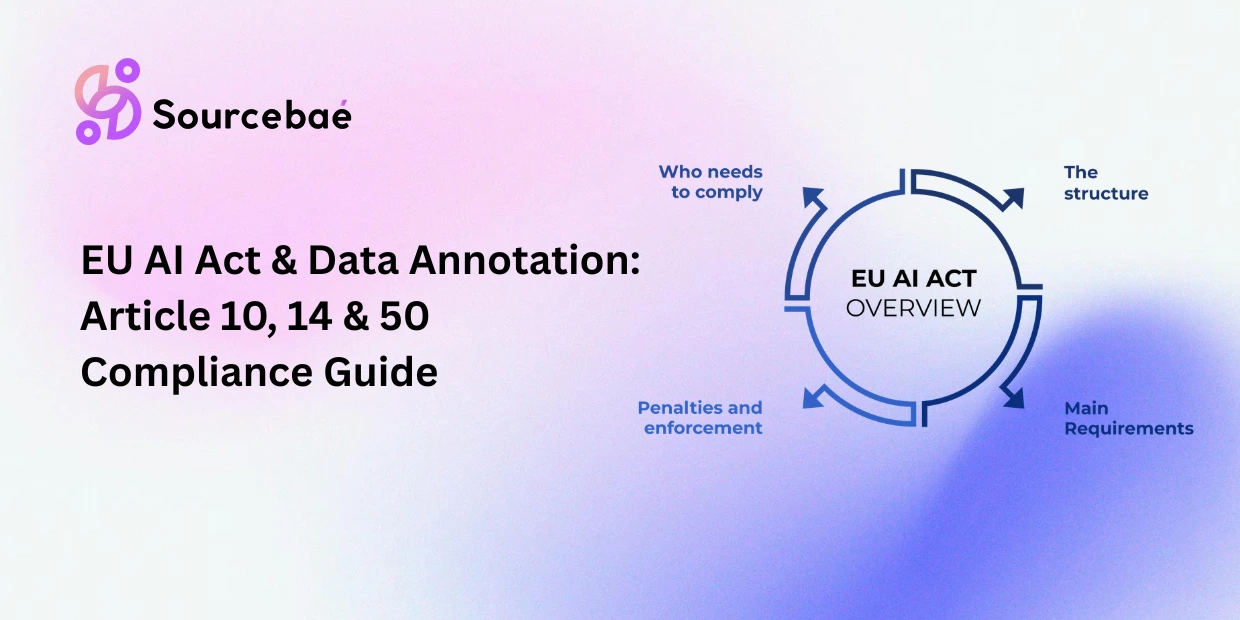

Measuring improvement

The feedback loop is only useful if improvement is measured. Track model accuracy on held-out test sets after each annotation iteration, IAA trends over time (agreement should remain stable or improve as guidelines mature), the rate at which new edge cases are discovered (a decreasing rate signals that the guidelines are converging toward completeness), and the volume of production-flagged data over time (a decreasing volume signals that the model is becoming more capable in deployment). (For the regulatory requirements that govern how these feedback loops must be documented, see our post on [EU AI Act annotation compliance Post 28].)

Step 7: Choosing Methodologies (The Decision Framework)

Not every project needs the same annotation approach. The final strategic decision is matching the right methodology to the right task.

Fully manual annotation is appropriate when the task requires subjective judgment, domain expertise, or regulatory-mandated human oversight. Medical imaging, content moderation, RLHF preference ranking, and legal document classification are typical use cases.

AI-assisted annotation is appropriate when a pretrained model can generate useful pre-labels that human annotators correct. Object detection, text classification, entity recognition, and segmentation tasks with well-defined categories are natural fits. (See our post on [AI-assisted annotation Post 24].)

Programmatic weak supervision is appropriate when domain experts can express labeling logic as rules or heuristics and the volume of unlabeled data is large. Text classification at scale, document routing, and entity extraction are common use cases. (See our post on [weak supervision Post 20 via Pillar Page].)

LLM-as-annotator is appropriate when the task aligns well with LLM capabilities (text classification, sentiment analysis, entity recognition) and the team has validated LLM accuracy against a gold standard. (See our post on [LLM-as-annotator Post 21 via Pillar Page].)

Hybrid pipelines combining two or more methodologies are the most common production configuration in 2026. A typical hybrid uses AI-assisted pre-labeling for the bulk of the data, active learning to select the most valuable examples for human review, and expert manual annotation for edge cases and quality validation.

(For the complete methodology taxonomy and decision flowchart, see our [Pillar Page].)
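The criteria in this step can be condensed into a rough screening function. This is a simplification for illustration, not the decision flowchart referenced above: the dictionary keys, the function name `candidate_methodologies`, and the fallback to fully manual annotation when nothing else applies are all assumptions.

```python
def candidate_methodologies(task):
    """List the methodologies whose stated conditions the task satisfies.

    `task` is a dict of boolean flags; missing flags are treated as False.
    More than one candidate suggests a hybrid pipeline.
    """
    candidates = []
    if task.get("needs_expert_judgment") or task.get("human_oversight_mandated"):
        candidates.append("fully manual")
    if task.get("useful_pretrained_model"):
        candidates.append("AI-assisted")
    if task.get("rules_expressible") and task.get("large_unlabeled_volume"):
        candidates.append("programmatic weak supervision")
    if task.get("llm_fits_task") and task.get("llm_validated_on_gold"):
        candidates.append("LLM-as-annotator")
    # Assumed fallback: with no other option available, humans label everything.
    return candidates or ["fully manual"]


medical_imaging = {"needs_expert_judgment": True, "useful_pretrained_model": True}
print(candidate_methodologies(medical_imaging))  # ['fully manual', 'AI-assisted']

document_routing = {"rules_expressible": True, "large_unlabeled_volume": True}
print(candidate_methodologies(document_routing))  # ['programmatic weak supervision']
```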

Downloadable Annotation Strategy Checklist

Use this checklist as a planning tool when starting a new annotation project or auditing an existing one.

Taxonomy:

Labels defined with MECE principles. Granularity matched to downstream model requirements. Hierarchical structure documented. Labels mapped to intended model behavior. Version control established.

Guidelines:

Task definition written. Every label defined with boundary conditions. Positive and negative examples provided for each label. Edge case protocols documented. Tool-specific instructions included. Guidelines reviewed and approved by project lead and domain expert.

Workforce:

Annotator expertise matched to task complexity. Qualification tests designed with gold-standard examples and passing thresholds. Onboarding training plan including supervised trial period. Ongoing performance monitoring configured with embedded gold standards.

Pilot:

200–500 examples annotated by 2+ annotators. IAA measured and compared to targets. Calibration round conducted with disagreement discussion. Guidelines revised based on pilot findings. Pilot-to-production gate criteria documented and met.

Production:

Automated quality monitoring active. Tiered review workflow configured. Versioned guideline management in place. Performance dashboards operational. Feedback loops connecting model performance to annotation quality.

Iteration:

Model error analysis feeding back to annotation priorities. Production-flagged data routed for annotation. Guidelines treated as living documents with version histories. Improvement metrics tracked across iterations.

Frequently Asked Questions

What is a data annotation strategy?

A data annotation strategy is the end-to-end plan governing how an organization produces, manages, and iterates on labeled training data, covering taxonomy design, guideline creation, workforce planning, quality assurance, scaling, and continuous feedback loops.

How do you design an annotation taxonomy?

Apply the MECE principle (mutually exclusive, collectively exhaustive), choose granularity based on downstream model requirements, organize labels hierarchically for complex domains, map every label to intended model behavior, and establish version control from day one.

How do you create annotation guidelines?

Effective guidelines include a task definition, precise label definitions with boundary conditions, positive and negative examples for every label, edge case protocols, and tool-specific instructions. The key is showing how labels apply to ambiguous real-world examples, not just defining them abstractly.

What are annotator training best practices?

Match expertise to task complexity, require qualification tests with gold-standard examples (85–90% threshold), provide structured onboarding with supervised trial periods, and monitor ongoing performance through embedded gold standards and annotator-level dashboards.

How do you scale annotation from pilot to production?

Build operational infrastructure for automated quality monitoring, tiered review workflows, versioned guideline management, performance dashboards, and feedback loops. Use hybrid workforce models combining in-house experts, outsourced annotators, and AI-assisted pre-labeling.

What is annotation workflow design?

Annotation workflow design is the process of structuring how data flows through the labeling pipeline, from raw data intake through pre-labeling, human annotation, review, adjudication, quality measurement, and export, with each step configured for the project’s specific quality, throughput, and compliance requirements.