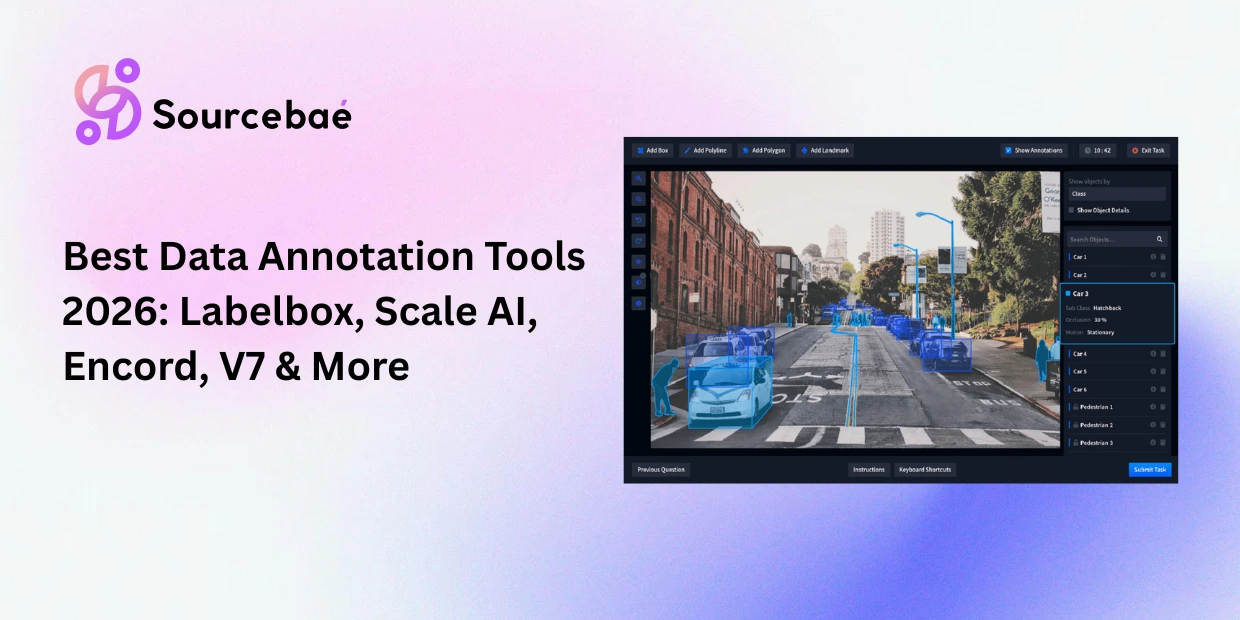

Choosing the right data annotation tools is one of the highest-leverage decisions an ML team makes. The platform you select determines your annotation speed, data quality ceiling, workflow flexibility, and long-term scalability, and switching costs are substantial once a team has built processes around a specific tool. In 2026, the annotation platform market has matured beyond basic labeling utilities into full data operations infrastructure: the best data annotation tools now combine annotation editors, AI-assisted pre-labeling, active learning, quality assurance pipelines, MLOps integration, workforce management, and compliance controls in unified platforms.

This data annotation tools comparison covers the five platforms that enterprise ML teams most frequently evaluate in 2026: Labelbox, Scale AI, Encord, V7 (Darwin), and SuperAnnotate. It compares them across the dimensions that actually determine fit: modality coverage, AI-assisted features, quality assurance depth, MLOps integration, security posture, pricing models, and ideal use cases. The market context matters: Scale AI projected approximately $2 billion in 2025 revenue and closed a ~$14.3 billion investment from Meta for a 49% stake, signaling the strategic importance of annotation infrastructure. Encord raised a $60 million Series C, positioning itself as the enterprise challenger in regulated industries. Labelbox, V7, and SuperAnnotate each occupy distinct niches that make direct comparison essential for teams evaluating options.

This guide is structured so you can read the full comparison or jump directly to the section most relevant to your use case. We update this post quarterly to reflect platform changes. (For foundational context on annotation methodologies, see our [comprehensive guide to data annotation Pillar Page].)

Evaluation Criteria: What Actually Matters

Before comparing platforms, it helps to establish the criteria that drive real-world selection decisions. Based on how enterprise teams evaluate data labeling platforms, the following dimensions carry the most weight:

Modality coverage

Modality coverage determines whether the platform supports your current and anticipated data types: images, video, text, audio, 3D point clouds, LiDAR, DICOM, geospatial, and documents. A platform that handles 80% of your needs forces you to maintain a second tool for the remainder.

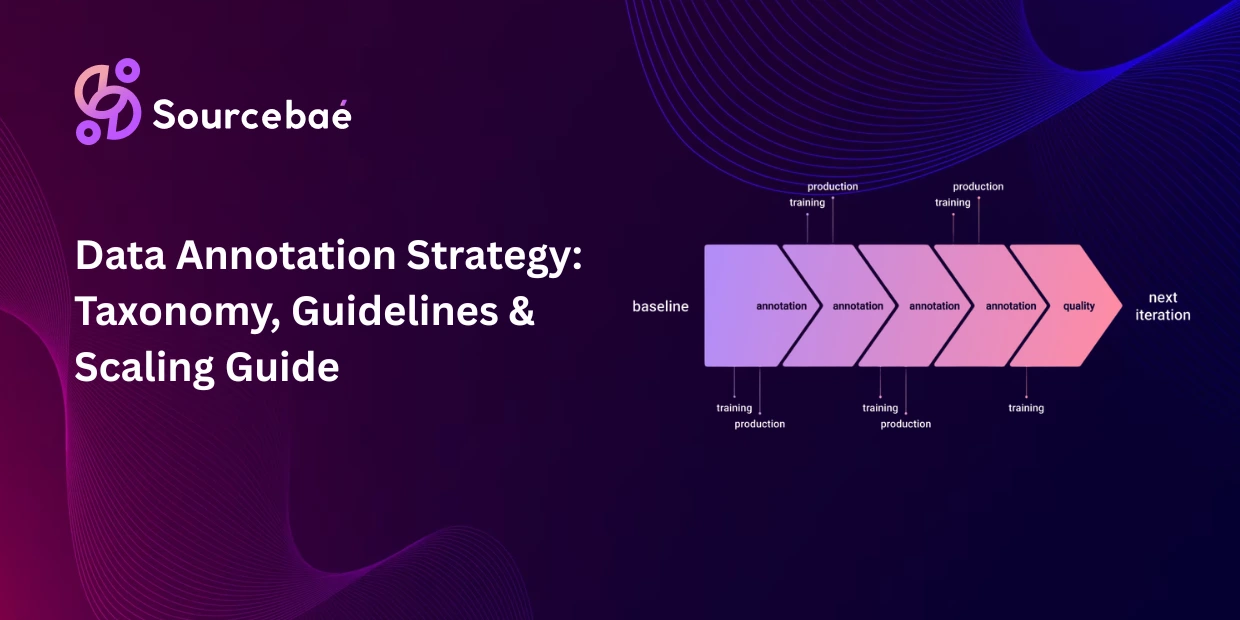

AI-assisted features

AI-assisted features determine productivity. Pre-labeling (model-assisted labeling), active learning integration, SAM/SAM 2 support, and LLM-assisted text annotation are now table stakes for competitive platforms. The differentiator is how deeply these features integrate into the annotation workflow versus being bolted on.

Quality assurance depth

Quality assurance depth determines whether you can trust your output. Consensus workflows, multi-level review stages, inter-annotator agreement metrics, gold-standard benchmarking, and audit trails separate production-grade platforms from prototyping tools.
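As a concrete illustration of an inter-annotator agreement metric, the following minimal sketch computes Cohen's kappa for two annotators who labeled the same items. It is a generic textbook formulation, not any platform's implementation; the function name and the sample labels are made up for the example.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(labels_a)
    # Observed agreement: fraction of items where both annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement, estimated from each annotator's label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1.0 - expected)


# Hypothetical labels from two annotators on six images.
annotator_1 = ["cat", "cat", "dog", "dog", "cat", "dog"]
annotator_2 = ["cat", "dog", "dog", "dog", "cat", "cat"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.3f}")
```

Kappa corrects raw agreement for the agreement expected by chance, which is why it is usually more informative than a plain percent-agreement figure when one class dominates.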

MLOps integration

MLOps integration determines whether the platform fits into your pipeline. API quality, SDK availability, export format support (COCO, YOLO, Pascal VOC, TFRecords, custom JSON), and native integrations with training frameworks and cloud storage providers matter for operational teams.
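Export format support matters because formats encode the same annotation differently. As a small sketch, the code below converts one COCO-style bounding box (`[x_min, y_min, width, height]` in pixels) into a YOLO-style label line (class id plus center and size, normalized to the image dimensions). The helper names and the sample values are illustrative assumptions.

```python
def coco_bbox_to_yolo(bbox, image_width, image_height):
    """Convert a COCO [x_min, y_min, w, h] pixel box to normalized YOLO values."""
    x_min, y_min, box_w, box_h = bbox
    x_center = (x_min + box_w / 2) / image_width
    y_center = (y_min + box_h / 2) / image_height
    return (x_center, y_center, box_w / image_width, box_h / image_height)


def yolo_line(class_id, bbox, image_width, image_height):
    """Format one YOLO label line: class x_center y_center width height."""
    values = coco_bbox_to_yolo(bbox, image_width, image_height)
    return f"{class_id} " + " ".join(f"{v:.6f}" for v in values)


# Hypothetical box on a 640x480 image.
line = yolo_line(0, [100, 50, 200, 100], 640, 480)
print(line)
```

When a platform exports only one of these formats natively, a conversion step like this becomes part of the pipeline you have to maintain.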

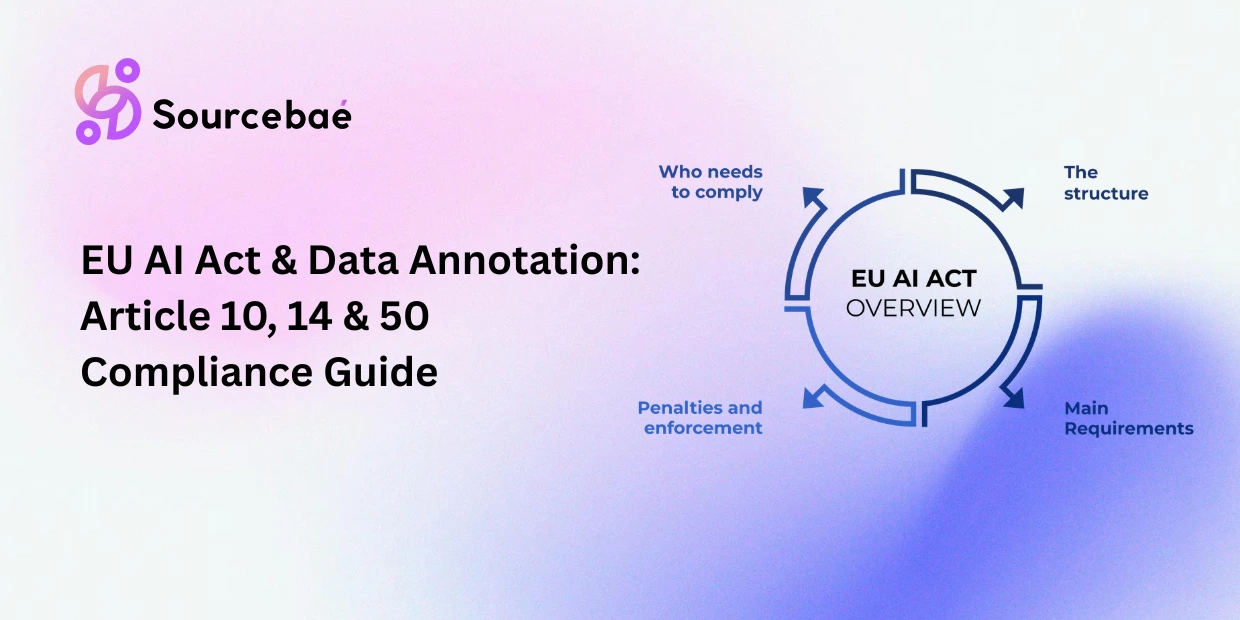

Security and compliance

Security and compliance determine whether you can use the platform for sensitive data. SOC 2, HIPAA, and ISO 27001 certifications, role-based access control, data encryption, deployment options (cloud, on-premise, hybrid), and audit logging are essential for healthcare, financial services, government, and defense projects.

Pricing model

The pricing model determines total cost of ownership. Some platforms charge per user, others per data unit, others by custom enterprise agreement. Hidden costs include storage, compute for AI-assisted features, and workforce management surcharges.
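To compare pricing models on equal footing, it can help to reduce each one to a single annual figure. The sketch below is a minimal illustration of that arithmetic; the `PricingScenario` fields and every dollar amount are hypothetical placeholders, not vendor quotes.

```python
from dataclasses import dataclass


@dataclass
class PricingScenario:
    name: str
    per_user_monthly: float = 0.0  # seat price, if the vendor charges per user
    flat_monthly: float = 0.0      # platform fee, if the vendor charges a flat rate
    per_item: float = 0.0          # price per labeled data unit, if applicable
    hidden_monthly: float = 0.0    # storage, AI-assist compute, workforce surcharges


def annual_cost(scenario, users, items_per_month):
    """Total yearly cost for a given team size and labeling volume."""
    monthly = (
        scenario.per_user_monthly * users
        + scenario.flat_monthly
        + scenario.per_item * items_per_month
        + scenario.hidden_monthly
    )
    return 12 * monthly


# Hypothetical scenarios: all figures are made up for illustration.
per_seat = PricingScenario("per-seat", per_user_monthly=60, hidden_monthly=200)
per_unit = PricingScenario("per-unit", flat_monthly=500, per_item=0.02)

seat_total = annual_cost(per_seat, users=10, items_per_month=50_000)
unit_total = annual_cost(per_unit, users=10, items_per_month=50_000)
print(f"{per_seat.name}: ${seat_total:,.0f}/yr, {per_unit.name}: ${unit_total:,.0f}/yr")
```

Re-running the comparison at your expected team size and volume a year or two out shows how quickly the ranking between models can flip.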

Head-to-Head Comparison Table

| Dimension | Labelbox | Scale AI | Encord | V7 (Darwin) | SuperAnnotate |
| --- | --- | --- | --- | --- | --- |
| Primary model | Platform | Platform + managed workforce | Platform | Platform | Platform + workforce option |
| Image annotation | Bounding box, polygon, segmentation, keypoint | Full suite via managed service | Full suite + SAM 2 integration | Full suite + auto-annotation | Full suite + vector tools |
| Video annotation | Frame-level + interpolation | Managed service | Frame-level + object tracking | Strong video suite + tracking | Frame-level + interpolation |
| Text/NLP | Classification, NER, relations | Via managed NLP teams | Document annotation, classification | Limited NLP support | Classification, NER |
| 3D/LiDAR | Point cloud support | Strong (AV focus) | Point cloud, sensor fusion | Limited | 3D cuboids, point clouds |
| Medical (DICOM) | Limited | Via managed service | Native DICOM/NIfTI support | Native DICOM/NIfTI | Limited |
| Geospatial | Geospatial data types | Satellite/aerial via service | Limited | Limited | Limited |
| AI-assisted labeling | Model-Assisted Labeling (MAL) | Internal AI + human review | SAM 2, GPT-4o, micro-models | Auto-annotation, model training | ML-assisted auto-labeling |
| Active learning | Supported | Internal prioritization | Native active learning + curation | Supported | Supported |
| QA workflows | Consensus, benchmarking | Multi-stage human QA | Multi-level review, audit trails | Review stages, consensus | Multi-level review, QA dashboard |
| MLOps integration | Extensive API/SDK, cloud connectors | API, custom integrations | API/SDK, cloud connectors | API, dataset versioning | API, cloud integrations |
| Security | SOC 2, ISO 27001, GDPR | Enterprise security, FedRAMP | SOC 2, HIPAA, on-premise option | SOC 2, GDPR | SOC 2, US/EU cloud hosting |
| Pricing | Free tier; Pro ~$500/mo; Enterprise custom | Custom enterprise/project pricing | Custom enterprise pricing | Per-user; custom enterprise | Starts ~$62/user/mo; Enterprise custom |
| Best for | Enterprise ML pipelines, multi-modality | Large-scale AV, LLM training, government | Healthcare, regulated industries, multimodal | Healthcare, video, research | CV teams needing flexible QA |

(For the image annotation techniques referenced in this table, such as bounding boxes, polygons, and segmentation, see our post on [image annotation methods Post 7]. For text/NLP annotation methods, see our post on [NLP annotation Post 8].)

Labelbox: The Enterprise Workflow Engine

Labelbox has established itself as the go-to platform for enterprise teams that need a comprehensive, multi-modality annotation environment tightly integrated with their ML pipeline. Gartner ranks it among the top data labeling solutions for organizations with complex annotation workflows.

Core strength: Model-Assisted Labeling (MAL)

Labelbox’s signature feature allows teams to connect their own models to the platform, generate pre-labels on unlabeled data, and have annotators refine those predictions. This creates a virtuous cycle: the model pre-labels, humans correct, the corrected data improves the model, and the next batch of pre-labels is more accurate. MAL supports custom model integration via API, making it model-agnostic rather than locked to a specific pre-labeling architecture.

Workflow management

Labelbox excels at orchestrating complex annotation projects across distributed teams. Task assignment, progress tracking, annotator performance analytics, and configurable review stages give project managers visibility and control. Consensus workflows (where multiple annotators label the same data point and disagreements are flagged) and benchmarking (where annotators are periodically tested against gold-standard examples) provide built-in quality assurance.
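The consensus idea described above can be sketched generically as a majority vote with a disagreement flag. This is an illustrative sketch only, not Labelbox's implementation or API; the function name, the `min_agreement` threshold, and the sample votes are assumptions.

```python
from collections import Counter


def consensus_label(votes, min_agreement=0.6):
    """Return (label, agreement, needs_review) for one data point.

    votes: labels assigned by different annotators to the same item.
    min_agreement: hypothetical threshold below which the item is flagged.
    """
    if not votes:
        raise ValueError("at least one vote is required")
    counts = Counter(votes)
    label, top_count = counts.most_common(1)[0]
    agreement = top_count / len(votes)
    return label, agreement, agreement < min_agreement


# Hypothetical votes from three annotators on two images.
clear_case = consensus_label(["car", "car", "truck"])
disputed_case = consensus_label(["car", "truck", "bus"])
print(clear_case)
print(disputed_case)
```

Items flagged as `needs_review` would typically be routed to a senior reviewer rather than accepted on the majority label alone.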

Modality coverage

The platform supports images, video, text, geospatial data, conversational data, and documents. This breadth makes it a viable single-platform choice for teams working across multiple data types. In mid-2025, Labelbox released Evaluation Studio specifically for LLM evaluation and real-time feedback workflows.

Limitations

G2 reviewers consistently note a steeper learning curve for new users and complexity in initial setup. Custom enterprise pricing means costs can escalate quickly at scale without careful negotiation. NLP-specific features, while present, are less deep than dedicated text annotation tools. (For how Labelbox’s MAL feature connects to the broader AI-assisted annotation paradigm, see our post on [AI-assisted annotation Post 24].)

Best for: Large enterprise teams running multi-modality annotation pipelines with existing ML models they want to integrate for pre-labeling. Teams that value workflow management depth and analytics.

Scale AI: The Managed Service Powerhouse

Scale AI operates a fundamentally different model from the other platforms in this comparison. While Labelbox, Encord, V7, and SuperAnnotate are primarily software platforms (you bring your own annotators), Scale AI combines a platform with a massive managed workforce of trained annotators. This Labelbox vs Scale AI distinction is the most important architectural difference in the comparison.

Core strength: Managed annotation at scale

Scale AI handles the entire annotation process: you provide data and specifications, Scale AI delivers labeled datasets. This end-to-end model is especially powerful for organizations that need high-volume annotation without building an internal labeling team. Scale AI’s workforce has deep experience in autonomous vehicle perception data, LLM alignment (RLHF), and government/defense applications.

Market position

Scale AI’s trajectory reflects the strategic importance of annotation infrastructure: revenue reached approximately $870 million in 2024 and was tracking toward $2 billion in 2025. Meta’s ~$14.3 billion investment for a 49% stake in 2025 was one of the largest AI infrastructure deals of the year. This investment both validated Scale AI’s market position and raised questions among some enterprise clients about data governance and vendor neutrality under Meta’s partial ownership.

LLM alignment specialization

Scale AI has become the default provider for many frontier AI labs’ RLHF and alignment annotation needs. Their workforce is trained for preference ranking, factuality evaluation, and safety red-teaming: the specialized annotation tasks that LLM development requires.

Limitations

The managed service model means less direct control over the annotation process compared to platform-only tools. Pricing is custom and enterprise-grade, which puts it out of reach for smaller teams or research budgets. The Meta investment has prompted some organizations, particularly in government and competitive AI development, to diversify their annotation vendors.

Best for: Organizations needing high-volume, production-grade annotation without building internal teams. Autonomous vehicle companies, frontier AI labs doing RLHF, and government agencies with large-scale perception data needs.

Encord: The Regulated Industry Specialist

Encord vs Labelbox is a comparison that comes down to domain focus. Where Labelbox optimizes for breadth and workflow management, Encord optimizes for depth in regulated industries, particularly healthcare, life sciences, and autonomous systems, where compliance, data governance, and domain-specific tooling are non-negotiable.

Core strength: Medical imaging and compliance

Encord provides native support for DICOM and NIfTI medical imaging formats, HIPAA and SOC 2 compliance, and on-premise deployment options for organizations that cannot send data to external clouds. This combination makes it the default evaluation candidate for healthcare AI teams. Encord also integrates foundation models like SAM 2 and GPT-4o directly into annotation workflows for AI-assisted pre-labeling.

Active learning and data curation

Encord differentiates by tightly coupling annotation with data curation and model evaluation. Its Index product helps teams identify the most valuable data to annotate by finding edge cases, detecting data quality issues, and surfacing underrepresented categories before annotation begins. This “curate then annotate” approach can significantly reduce wasted annotation effort.
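One common way to decide which data is most valuable to annotate is uncertainty sampling: rank unlabeled items by how unsure the current model is and send the most uncertain ones to annotators first. The sketch below shows that generic technique using prediction entropy. It is not a description of how Encord's product works; the function names, item ids, and probabilities are hypothetical.

```python
import math


def entropy(probs):
    """Shannon entropy of a class-probability vector (higher = less certain)."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_annotation(predictions, budget):
    """Pick the `budget` items whose model predictions are most uncertain.

    predictions: dict mapping item id -> list of class probabilities.
    """
    ranked = sorted(predictions, key=lambda k: entropy(predictions[k]), reverse=True)
    return ranked[:budget]


# Hypothetical model outputs for four unlabeled images.
preds = {
    "img_001": [0.98, 0.01, 0.01],
    "img_002": [0.40, 0.35, 0.25],
    "img_003": [0.70, 0.20, 0.10],
    "img_004": [0.34, 0.33, 0.33],
}
picked = select_for_annotation(preds, budget=2)
print(picked)
```

Confidently predicted items such as `img_001` are left for later, which is where the savings in annotation effort come from.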

Multimodal coverage

The platform supports images, video, text, documents, audio, and medical imaging within a single workspace. For teams working across modalities in regulated environments, this consolidation reduces tool sprawl and simplifies compliance management.

Limitations

Some G2 reviewers note navigation friction and occasional latency, particularly on large datasets. The platform’s depth in regulated industries means some features are optimized for compliance workflows at the expense of simplicity for less regulated use cases. Enterprise pricing lacks transparency for initial evaluation.

Best for: Healthcare AI teams, autonomous systems teams working with sensor fusion data, and any organization in regulated industries where HIPAA, SOC 2, and data sovereignty are requirements. (For how DICOM annotation workflows connect to medical AI development, see our post on [medical imaging annotation Post 23].)

V7 (Darwin): The Research and Healthcare Hybrid

V7 Labs, through its Darwin platform, targets the intersection of research agility and production quality, with particular depth in healthcare and life sciences applications.

Core strength: Auto-annotation and model training integration

V7’s differentiator is tight integration between annotation and model training. Teams can train models directly within the platform on their annotated data, then use those models for auto-annotation on subsequent batches. This closed-loop approach makes V7 particularly appealing for research teams that iterate rapidly between labeling and modeling.

Medical imaging depth

V7 provides native DICOM and NIfTI support with specialized tools for radiology and pathology annotation. The platform has built a reputation in the life sciences vertical, where its combination of auto-annotation and medical format support addresses both the speed and domain-specificity requirements.

Dataset versioning

V7 includes built-in dataset versioning, which tracks changes to annotations over time and enables rollback to previous versions. For research teams that need reproducibility and experiment tracking, this feature eliminates the need for external data versioning tools.
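To make the idea of annotation versioning and rollback concrete, here is a minimal in-memory sketch that snapshots annotations under a content hash. It is a generic illustration and does not reflect V7's API or storage design; the `AnnotationStore` class and the sample annotation are hypothetical.

```python
import copy
import hashlib
import json


class AnnotationStore:
    """Toy annotation store with content-addressed snapshots."""

    def __init__(self):
        self.current = {}     # item id -> list of annotation dicts
        self._versions = {}   # version id -> frozen snapshot
        self.history = []     # version ids in commit order

    def commit(self):
        """Snapshot the current annotations and return a version id."""
        payload = json.dumps(self.current, sort_keys=True).encode("utf-8")
        version_id = hashlib.sha256(payload).hexdigest()[:12]
        if version_id not in self._versions:
            self._versions[version_id] = copy.deepcopy(self.current)
        self.history.append(version_id)
        return version_id

    def rollback(self, version_id):
        """Restore the working annotations to an earlier snapshot."""
        self.current = copy.deepcopy(self._versions[version_id])


store = AnnotationStore()
store.current["img_001"] = [{"label": "tumor", "bbox": [10, 20, 30, 40]}]
v1 = store.commit()
store.current["img_001"][0]["label"] = "cyst"  # a later relabeling
v2 = store.commit()
store.rollback(v1)
print(store.current["img_001"][0]["label"])
```

Because the version id is derived from the content, a training run can record it and later reproduce exactly the labels it was trained on.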

Limitations

Per-user pricing can become expensive for large teams. NLP and text annotation support is more limited than Labelbox or SuperAnnotate. Some reviewers flag gaps in export format flexibility and file handling for certain workflows. 3D and LiDAR support is less developed than Encord or Scale AI.

Best for: Research teams and life sciences organizations that want tight model-training integration and strong medical imaging support. Teams that value auto-annotation workflows and dataset versioning.

SuperAnnotate: The Computer Vision QA Specialist

Reviews of SuperAnnotate consistently highlight two themes: exceptional quality assurance tooling and deep computer vision capabilities with fine-grained control over annotation workflows.

Core strength: Quality assurance and customization

SuperAnnotate provides multi-level QA workflows with configurable review stages, annotation consensus, and performance analytics. The platform’s flexibility in customizing annotation interfaces (tailoring the editor to show exactly the tools and information each annotator needs) reduces errors and improves efficiency on complex projects.

Computer vision depth

The platform supports images, video, LiDAR, and 3D point clouds with a comprehensive set of annotation tools: bounding boxes, polygons, polylines, keypoints, cuboids, and semantic segmentation. Vector annotation tools provide sub-pixel precision that some competing platforms lack.

Workforce option

Unlike pure software platforms, SuperAnnotate offers access to managed annotation teams, giving enterprises the option to use the platform self-serve or combine it with SuperAnnotate’s workforce for projects where they need to scale quickly without hiring.

Deployment flexibility

Data hosting options include US and EU cloud regions, with options for private cloud or on-premise deployment for organizations with strict data residency requirements. Starting at approximately $62 per user per month with a 14-day free trial, SuperAnnotate is more transparent on entry-level pricing than most competitors.

Limitations

The platform is enterprise-oriented, which means it can be overkill for small teams or simple projects. Initial setup complexity can be high for organizations that need the full customization capability. NLP support exists but is not as deep as dedicated text annotation tools.

Best for: Computer vision teams that need granular QA control, enterprise organizations managing large distributed annotation teams, and projects requiring fine-grained annotation precision with 3D/LiDAR support.

Comparison by Capability

Best Annotation Tool for Images

For pure image annotation, all five platforms are competent. Labelbox and SuperAnnotate offer the broadest annotation tool suites for general computer vision. Encord and V7 lead for medical imaging (DICOM/NIfTI). Scale AI is the choice if you want a managed service to handle image annotation end-to-end. For SAM-based auto-segmentation, Encord has the deepest integration with SAM 2. (For the annotation techniques themselves, see our post on [image annotation methods Post 7].)

Annotation Tool for NLP

Text and NLP annotation is where the platforms diverge most. Labelbox offers the broadest NLP coverage among the five, supporting classification, NER, and relation extraction, with its Evaluation Studio adding LLM evaluation capabilities. Scale AI provides NLP annotation through its managed service with specialized workforce teams. Encord supports document annotation and classification. V7 and SuperAnnotate offer text classification and basic NER but are primarily computer vision platforms. Teams with deep NLP needs may want to evaluate dedicated tools like Prodigy or Label Studio alongside these platforms. (For NLP annotation methodology, see our post on [text annotation for NLP Post 8].)

3D/LiDAR Support

For autonomous vehicles and robotics, 3D annotation depth is critical. Scale AI has the deepest autonomous vehicle annotation expertise through its managed service. Encord supports point cloud annotation with sensor fusion capabilities. SuperAnnotate provides 3D cuboids and point cloud tools. Labelbox offers point cloud support. V7 has more limited 3D capabilities.

Geospatial Support

Geospatial annotation remains a niche capability. Labelbox provides the most explicit geospatial data type support among the five. Scale AI handles satellite and aerial imagery through its managed service. The other platforms have limited or no dedicated geospatial tooling; teams with significant geospatial needs should evaluate specialized tools alongside these general-purpose platforms. (For geospatial annotation methods, see our post on [satellite and geospatial annotation Post 12].)

AI-Assisted Features

All five platforms now offer AI-assisted annotation, but the implementations differ. Labelbox’s Model-Assisted Labeling connects your own models for pre-labeling. Encord integrates SAM 2, GPT-4o, and custom micro-models for multi-modal assistance. V7 provides in-platform model training for closed-loop auto-annotation. SuperAnnotate offers ML-assisted auto-labeling with vector tools. Scale AI uses internal AI models to pre-label before human review by its managed workforce. (For the full AI-assisted annotation paradigm, see our post on [AI-assisted annotation Post 24].)

MLOps Integration

Labelbox leads in MLOps integration depth, with extensive APIs, SDKs, cloud storage connectors (AWS, GCP, Azure), and export format support. Encord and V7 provide strong API/SDK access with dataset versioning. SuperAnnotate offers API integrations with cloud storage and standard export formats. Scale AI provides API access, though the managed service model means less granular pipeline integration.

Pricing Summary

Transparent pricing remains an industry pain point. SuperAnnotate is the most transparent, starting at ~$62/user/month with a free trial. Labelbox offers a free community edition and Pro tier starting at ~$500/month. V7 uses per-user pricing. Encord and Scale AI require sales conversations for enterprise pricing. All platforms offer custom enterprise agreements for large deployments.

Verdict by Use Case

“I need an end-to-end enterprise platform for multi-modality annotation.” → Labelbox. Its workflow management, MAL integration, and broad modality support make it the safest general-purpose enterprise choice.

“I need high-volume annotation without building an internal team.” → Scale AI. The managed workforce model eliminates annotator hiring, training, and management overhead. Best for AV, LLM alignment, and government projects.

“I work in healthcare or a regulated industry.” → Encord. Native DICOM support, HIPAA compliance, on-premise deployment, and active learning curation make it purpose-built for regulated environments.

“I’m a research team that iterates rapidly between labeling and training.” → V7. In-platform model training, auto-annotation, and dataset versioning create the fastest experiment-to-insight loop.

“I need maximum QA control for complex computer vision.” → SuperAnnotate. Configurable QA workflows, vector precision tools, and flexible annotation interfaces give the finest control over output quality.

“I’m a startup or small team on a budget.” → Consider Label Studio (open-source, free) or CVAT (open-source, free) before committing to commercial platforms. These open-source tools require more engineering setup but eliminate licensing costs. (For how to evaluate annotation workflows from a project management perspective, see our post on [annotation project management and scaling Post 5].)

Frequently Asked Questions

What are the best data annotation tools in 2026?

The five most widely evaluated enterprise platforms are Labelbox, Scale AI, Encord, V7 (Darwin), and SuperAnnotate. Open-source alternatives include Label Studio and CVAT. The best choice depends on your data modality, compliance requirements, team size, and budget.

How does Labelbox compare to Scale AI?

Labelbox is a software platform: you bring your own annotators and manage the workflow. Scale AI combines a platform with a managed annotation workforce. Choose Labelbox if you want control and pipeline integration; choose Scale AI if you want end-to-end managed annotation.

What is the best annotation tool for medical images?

Encord and V7 both offer native DICOM and NIfTI support. Encord leads for teams requiring HIPAA compliance and on-premise deployment. V7 is strong for research teams that want integrated model training alongside medical format support.

Which platform has the best AI-assisted annotation features?

Encord leads with SAM 2 and GPT-4o integration. Labelbox’s Model-Assisted Labeling provides the most model-agnostic pre-labeling. V7’s in-platform model training creates the tightest annotation-to-training loop.

How much do data annotation tools cost?

SuperAnnotate starts at ~$62/user/month. Labelbox Pro starts at ~$500/month. V7 uses per-user pricing. Encord and Scale AI require custom enterprise quotes. Open-source tools (CVAT, Label Studio) are free but require engineering setup and maintenance.

Which platform is best for NLP annotation?

Among the five, Labelbox has the broadest NLP coverage. For dedicated NLP annotation, evaluate Prodigy (active learning-focused NLP tool) or Label Studio (open-source with strong text support) alongside enterprise platforms.