The EU AI Act’s core framework becomes broadly operational on August 2, 2026, and for annotation teams building training data for high-risk AI systems, compliance is not optional. Article 10 of the Act explicitly names “annotation” and “labelling” as data-preparation operations subject to mandatory governance requirements. Article 14 requires that high-risk systems be designed for effective human oversight. Article 50’s transparency obligations become enforceable on the same date.

For any team producing training data for AI systems deployed in Europe, these provisions transform annotation from a technical task into a regulated activity with documented obligations, audit expectations, and potential penalties for non-compliance.

This is not a theoretical concern. The EU AI Act applies to any provider placing a high-risk AI system on the European market or putting one into service in the EU, regardless of where the provider or the annotation team is located. A data labeling team in the United States, India, or the Philippines producing training data for a medical imaging system marketed in Germany must comply with the Act’s data governance requirements. With most high-risk obligations taking effect in August 2026 and full compliance for medical device AI required by August 2027, the compliance window is closing.

This post translates the EU AI Act’s regulatory requirements into actionable guidance for annotation teams. It covers Article 10 (data governance), Article 14 (human oversight), Article 50 (transparency), data provenance requirements, annotation audit trails, ethical annotation obligations, and a compliance checklist designed for practical implementation.

EU AI Act Overview for Annotation Teams

The EU AI Act uses a risk-based classification system with four risk levels: unacceptable risk (prohibited), high-risk (heavily regulated), limited risk (transparency obligations), and minimal risk (largely unregulated). Annotation compliance obligations fall primarily in the high-risk category. This category covers AI systems in healthcare, critical infrastructure, education, employment, law enforcement, and migration.

The timeline that matters

The Act entered into force on August 1, 2024. Prohibited AI practices and AI literacy obligations applied from February 2, 2025. Governance rules and GPAI model obligations became applicable on August 2, 2025. The core framework for high-risk AI systems, including Articles 10, 14, and 50, applies from August 2, 2026. High-risk systems embedded in regulated products (like medical devices under the EU MDR) have an extended transition until August 2, 2027.

Why annotation teams specifically need to care

Article 10(2)(c) of the Act explicitly identifies “annotation, labelling, cleaning, updating, enrichment and aggregation” as data-preparation operations subject to governance requirements. This is not a general reference to data quality; it is a specific regulatory obligation that applies to the annotation process itself. Annotation teams are now directly within the scope of regulatory compliance, not only indirectly through their clients.

Penalties

Non-compliance with high-risk system requirements can result in fines of up to €15 million or 3% of global annual turnover, whichever is higher. For prohibited AI practices, fines can reach €35 million or 7% of turnover. These numbers make compliance a board-level priority, not just an operational consideration. (For foundational annotation principles, see our [comprehensive guide to data annotation].)
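
The “whichever is higher” rule is simple arithmetic, sketched below. The function name and the example turnover figures are hypothetical, and the result is only the statutory ceiling for a given turnover, not a prediction of any actual fine.

```python
def fine_ceiling_eur(global_turnover_eur: int, fixed_cap_eur: int, turnover_pct: int) -> int:
    """Return the higher of the fixed cap and the turnover-based cap (whole euros)."""
    turnover_cap = global_turnover_eur * turnover_pct // 100
    return max(fixed_cap_eur, turnover_cap)


# (fixed cap in EUR, percentage of global annual turnover), as quoted above
HIGH_RISK = (15_000_000, 3)
PROHIBITED = (35_000_000, 7)

# Hypothetical provider with EUR 2 billion global annual turnover
large_turnover = 2_000_000_000
high_risk_cap = fine_ceiling_eur(large_turnover, *HIGH_RISK)
prohibited_cap = fine_ceiling_eur(large_turnover, *PROHIBITED)

# Hypothetical provider with EUR 100 million turnover: the fixed cap dominates
small_cap = fine_ceiling_eur(100_000_000, *HIGH_RISK)

print(high_risk_cap, prohibited_cap, small_cap)
```

For the larger provider the percentage-based cap applies; for the smaller one the fixed amount is the ceiling.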

Article 10: Data Governance as Annotation’s Regulatory Foundation

Article 10 of the EU AI Act establishes the data governance requirements that directly regulate how training, validation, and testing datasets are produced. For annotation teams, this article is the most operationally significant provision in the entire Act.

What Article 10 requires

Training, validation, and testing datasets must be subject to governance and management practices covering the areas listed in Article 10(2). The five most relevant to annotation are covered below, and each translates into concrete annotation obligations:

Data collection and origin (Art. 10(2)(b))

Teams must document where the data came from, how it was collected, and, for personal data, the original purpose of collection. For annotation teams, this means maintaining records of data sources, collection methods, and any consent or licensing agreements governing the data.

Annotation and labeling processes (Art. 10(2)(c))

The Act requires documentation of “relevant data-preparation processing operations, such as annotation, labelling, cleaning, updating, enrichment and aggregation.” This is the provision that places annotation squarely within regulatory scope. Teams must document their annotation guidelines, the methods used (manual, AI-assisted, programmatic), the tools employed, and the quality controls applied.

Assumptions and representativeness (Art. 10(2)(d), 10(3))

Datasets must be “relevant, sufficiently representative, and to the best extent possible, free of errors and complete.” They must have “appropriate statistical properties” including with respect to the persons or groups of persons the system serves. For annotation, this means teams must document and justify label schemas. The annotated dataset must represent the intended deployment context accurately. Teams must also make explicit any assumptions they embed in the labeling guidelines.

Bias examination and mitigation (Art. 10(2)(f), 10(2)(g))

Teams must examine datasets for “possible biases that are likely to affect the health and safety of persons, have a negative impact on fundamental rights or lead to discrimination prohibited under Union law.” They must also implement “appropriate measures to detect, prevent, and mitigate possible identified biases.” For annotation, this means conducting bias audits on labeled data, documenting any identified biases, and implementing corrective measures, whether through re-annotation, guideline revision, or targeted data collection.

Data gap identification (Art. 10(2)(h))

Teams must identify “relevant data gaps or shortcomings that prevent compliance” and document strategies for addressing them. If a dataset under-represents a demographic group, geographic region, or edge case category relevant to the intended deployment, the annotation team must document the gap and the plan to fill it.
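
A gap check of this kind can be sketched in a few lines. The example below is a minimal illustration, assuming each item carries one group attribute and that the team has already agreed target shares for the deployment population. The function name, the group labels, and the 5-point tolerance are hypothetical choices, not values taken from the Act.

```python
from collections import Counter


def representation_gaps(sample_groups, target_shares, tolerance=0.05):
    """Return groups whose share of the dataset falls short of the target
    share by more than `tolerance` (an assumed, project-specific threshold)."""
    counts = Counter(sample_groups)
    total = len(sample_groups)
    gaps = {}
    for group, target in target_shares.items():
        observed = counts.get(group, 0) / total if total else 0.0
        shortfall = target - observed
        if shortfall > tolerance:
            gaps[group] = {
                "target": target,
                "observed": round(observed, 3),
                "shortfall": round(shortfall, 3),
            }
    return gaps


# Hypothetical dataset: one age-band attribute per annotated item
groups = ["18-39"] * 60 + ["40-64"] * 35 + ["65+"] * 5
# Hypothetical target shares for the intended deployment population
target = {"18-39": 0.40, "40-64": 0.35, "65+": 0.25}

gaps = representation_gaps(groups, target)
print(gaps)
```

The returned dictionary is the raw material for the documented gap. The plan to fill it (targeted collection, re-sampling) still has to be written down by the team.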

Article 14: Human Oversight and What It Means for Annotation

EU AI Act Article 14 requires providers to design and develop high-risk AI systems so that natural persons can effectively oversee them during use. This includes providing appropriate human-machine interface tools to support that oversight. For annotation teams, this provision has both direct and indirect implications.

Direct implications for annotation

Human oversight of an AI system depends on the quality of the training data that shaped its behavior. If a team produces training data without adequate human review (for instance, through fully automated labeling with no human verification), the system may develop errors that no amount of deployment-time oversight can correct. Article 14 creates an implicit requirement that the annotation process itself includes adequate human oversight: human-in-the-loop review, quality assurance, and error correction protocols that ensure the training data is trustworthy.

The automation bias provision

Article 14(4)(b) specifically requires that human overseers “remain aware of the possible tendency of automatically relying or over-relying on the output produced by a high-risk AI system (automation bias).” For annotation teams using AI-assisted pre-labeling, this provision is directly relevant. If annotators are reviewing AI-generated pre-labels, they must be trained and supported to genuinely evaluate and correct those labels rather than passively accepting them. The annotation workflow must be designed to prevent the automation bias that Article 14 identifies as a risk.
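
One way to check whether reviewers are genuinely evaluating pre-labels is to seed a small number of deliberately wrong pre-labels and measure how often each annotator changes them. The sketch below assumes that approach; the Act does not prescribe it. The `Review` fields and the 0.8 threshold are hypothetical, and "changed" is used as a simple proxy for "corrected".

```python
from dataclasses import dataclass


@dataclass
class Review:
    annotator_id: str
    prelabel: str
    final_label: str
    seeded_error: bool  # True if the pre-label was deliberately made wrong


def catch_rates(reviews):
    """Per annotator: share of seeded wrong pre-labels that the annotator changed."""
    seen, caught = {}, {}
    for r in reviews:
        if not r.seeded_error:
            continue
        seen[r.annotator_id] = seen.get(r.annotator_id, 0) + 1
        if r.final_label != r.prelabel:
            caught[r.annotator_id] = caught.get(r.annotator_id, 0) + 1
    return {a: caught.get(a, 0) / n for a, n in seen.items()}


def flag_over_reliance(reviews, min_catch_rate=0.8):
    """Annotators whose catch rate is below the (assumed) minimum."""
    rates = catch_rates(reviews)
    return sorted(a for a, rate in rates.items() if rate < min_catch_rate)


reviews = [
    Review("ann_a", "cat", "dog", True),
    Review("ann_a", "cat", "dog", True),
    Review("ann_b", "cat", "cat", True),   # seeded error accepted unchanged
    Review("ann_b", "cat", "dog", True),
    Review("ann_b", "dog", "dog", False),  # genuine pre-label, ignored here
]

rates = catch_rates(reviews)
flagged = flag_over_reliance(reviews)
print(rates, flagged)
```

A low catch rate is a prompt for retraining or workflow changes rather than a verdict on the annotator. The seeded items and the follow-up actions belong in the oversight documentation.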

Documentation requirements

Teams must document the human oversight measures built into the annotation process: who reviews the labels, what qualifications they hold, what authority they have to override automated predictions, and how disagreements between human annotators, or between human annotators and AI pre-labels, are resolved. These records become part of the technical documentation required under Article 11. (For how human-in-the-loop workflows address these requirements, see our post on [human-in-the-loop annotation].)

Article 50: Transparency and Documentation

Article 50 of the EU AI Act establishes transparency obligations for providers and deployers of certain AI systems. While Article 50 primarily addresses end-user transparency (disclosing that content was AI-generated, marking deepfakes, informing users they are interacting with AI), its requirements have upstream implications for annotation teams.

Transparency of training data

For general-purpose AI models, Articles 53 and 55 require transparency about training data, including a summary of the content used for model training. The European Commission has published templates for this training content summary. Annotation teams producing training data for GPAI models must be prepared to contribute information about the annotated datasets: what data was labeled, by whom, using what methods, and with what quality controls.

The Code of Practice

The European Commission is developing a Code of Practice on transparent generative AI systems, expected to be finalized by June 2026, that will provide practical implementation guidance for Article 50 obligations. Annotation teams should monitor this Code’s development, as it may introduce specific documentation requirements for how training data (including annotations) is described and disclosed.

Enforcement date

Article 50’s transparency obligations become enforceable on August 2, 2026, the same date as the high-risk system requirements. Teams that have not implemented transparency documentation by this date face compliance risk.

Data Provenance for AI Compliance: Tracing Every Label

Data provenance, the documented chain of custody from raw data through annotation to model training, is a cross-cutting requirement that flows from Articles 10, 11, 12, and 18 of the EU AI Act. For annotation teams, provenance is not a nice-to-have; it is the mechanism that makes compliance demonstrable.

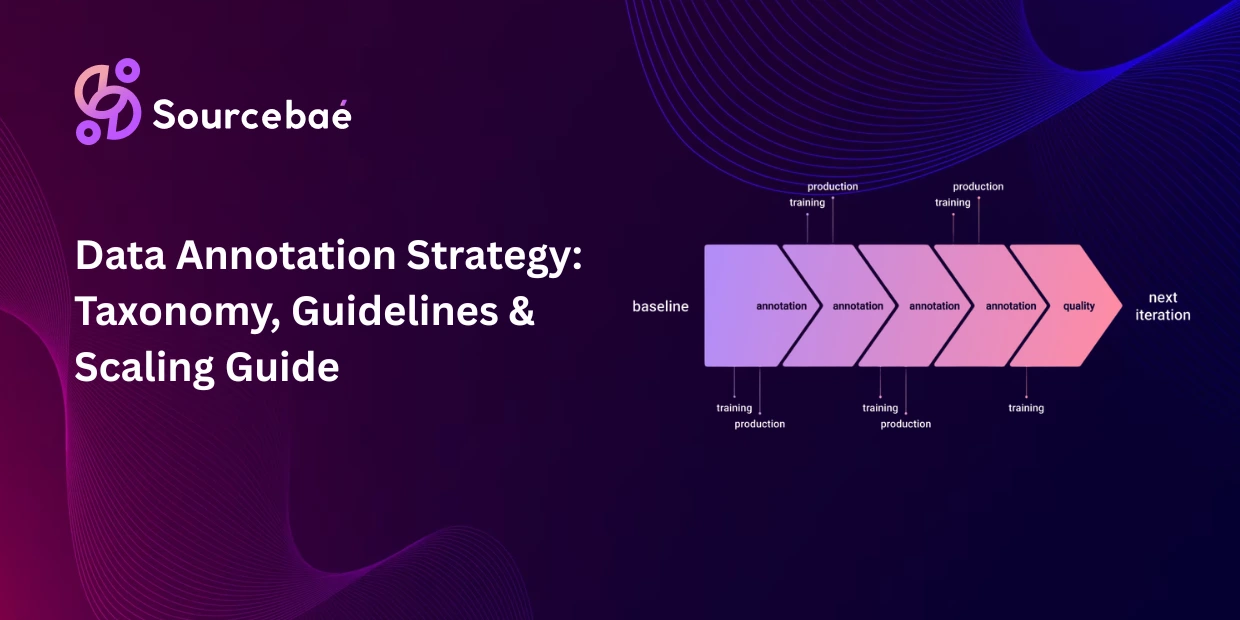

What provenance means for annotation

Every label in a compliant dataset must be traceable to its origin. The provenance record should include:

- The raw data source: where the data came from and under what terms.

- The annotator: who produced the label, whether an individual, a team, or an automated system.

- The annotation method: manual, AI-assisted, programmatic, or LLM-generated.

- The guideline version: which version of the annotation guidelines was in effect when the label was produced.

- The timestamp: when the label was created and, if applicable, when it was reviewed or corrected.

- The review chain: who reviewed the label, what the review outcome was, and whether any corrections were made.

- The tool and model versions: which annotation platform, which pre-labeling model, and which software version was used.
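
The provenance fields described above map naturally onto a small, immutable record attached to each label. The following is a minimal sketch, not a standard schema: the class names, field names, allowed method values, and example identifiers are all hypothetical.

```python
import json
from dataclasses import dataclass, asdict
from typing import Optional

# Assumed vocabulary for the annotation method field
ALLOWED_METHODS = {"manual", "ai_assisted", "programmatic", "llm_generated"}


@dataclass(frozen=True)
class ReviewStep:
    reviewer_id: str
    outcome: str       # e.g. "approved", "corrected", "rejected"
    reviewed_at: str   # ISO 8601 timestamp
    corrected: bool = False


@dataclass(frozen=True)
class LabelProvenance:
    label_id: str
    source_id: str          # where the raw data came from
    source_terms: str       # licence or consent terms governing the data
    annotator_id: str       # individual, team, or automated system
    method: str             # one of ALLOWED_METHODS
    guideline_version: str
    created_at: str         # ISO 8601 timestamp
    tool_version: str
    prelabel_model_version: Optional[str] = None
    review_chain: tuple = ()  # tuple of ReviewStep, in review order

    def __post_init__(self):
        if self.method not in ALLOWED_METHODS:
            raise ValueError(f"unknown annotation method: {self.method!r}")


record = LabelProvenance(
    label_id="lbl-0001",
    source_id="hospital-batch-07",
    source_terms="research licence v2",
    annotator_id="annotator-17",
    method="ai_assisted",
    guideline_version="3.2",
    created_at="2026-03-01T09:15:00+00:00",
    tool_version="platform 5.4.1",
    prelabel_model_version="prelabeler 1.8",
    review_chain=(
        ReviewStep("reviewer-04", "corrected", "2026-03-02T14:00:00+00:00", True),
    ),
)

# Serialize for storage alongside the label
record_json = json.dumps(asdict(record), sort_keys=True)
print(record_json)
```

Freezing the dataclass makes accidental in-place edits fail loudly, so a correction has to be expressed as a new review step rather than a silent overwrite.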

Why provenance matters for compliance

When a regulator, auditor, or conformity assessment body examines a high-risk AI system, they need to trace from the system’s behavior back through the model to the training data to the annotation process. If the annotation team cannot produce this chain of documentation, the system fails its conformity assessment, regardless of how well the model performs.

Practical implementation

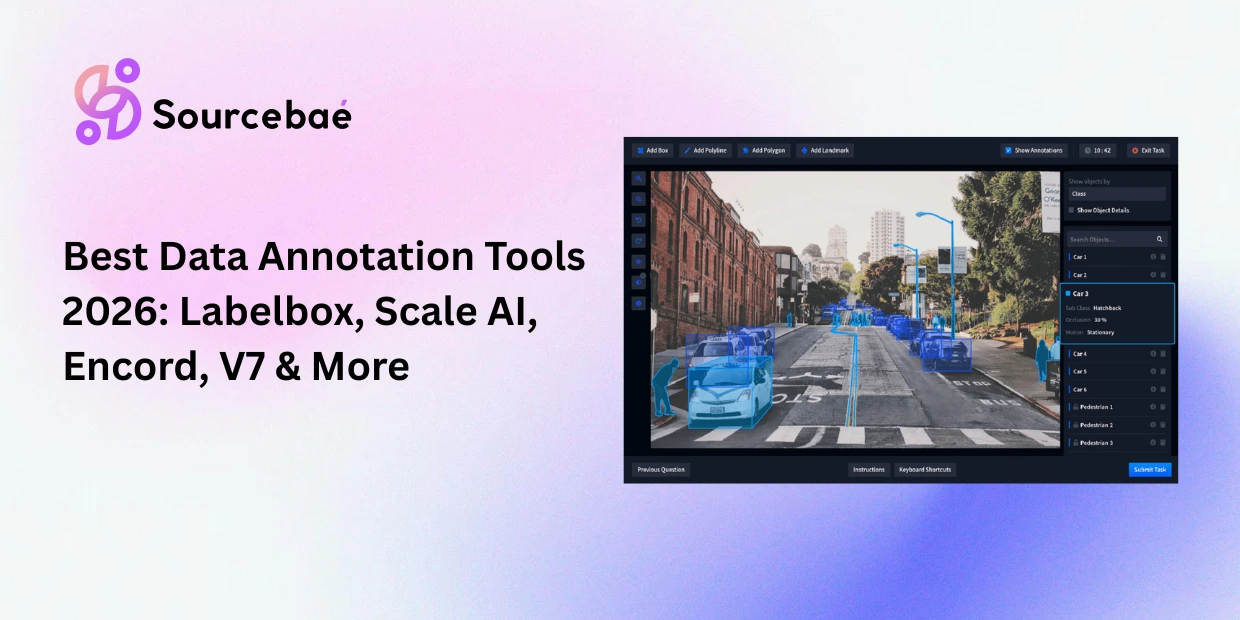

Provenance tracking does not require exotic tooling. It requires disciplined metadata management: every label carries a metadata object recording its provenance, and the annotation platform maintains an immutable log of all annotation activities. Most enterprise annotation platforms (Labelbox, Encord, SuperAnnotate) support audit logging and metadata tracking. The key is configuring these features before annotation begins, not retrofitting provenance after the dataset is complete.

Annotation Audit Trail Requirements

An annotation audit trail is the complete, chronological record of every action taken on a dataset during the annotation process. Article 12 of the EU AI Act requires automatic event-logging for high-risk systems, and Article 18 requires documentation keeping. Together, these provisions create a requirement for annotation teams to maintain comprehensive audit trails.

What the audit trail must capture

At minimum, a compliant audit trail records:

- Every label creation, modification, and deletion: who changed what, when, and why.

- Every review and approval decision: who approved the label and what criteria they applied.

- Every guideline version change: what was changed, when, and how it affected existing labels.

- Every quality metric measurement: inter-annotator agreement scores, accuracy against gold standards, and error rates.

- Every data access event: who accessed the dataset, when, and for what purpose.
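
Inter-annotator agreement is one of the quality metrics named above. As an illustration, Cohen's kappa for two annotators can be computed and stored as an audit event as sketched below. The choice of kappa is an assumption (the text names no particular agreement statistic), and the event fields and project identifier are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("label lists must be non-empty and of equal length")
    n = len(labels_a)
    # Observed agreement: fraction of items with identical labels
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Expected chance agreement from each annotator's label frequencies
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["pos", "pos", "neg", "neg", "pos", "neg", "pos", "neg"]
annotator_2 = ["pos", "pos", "neg", "pos", "pos", "neg", "neg", "neg"]

kappa = cohens_kappa(annotator_1, annotator_2)

# Hypothetical audit event recording the measurement
metric_event = {
    "event": "quality_metric",
    "metric": "cohens_kappa",
    "value": round(kappa, 3),
    "annotators": ["annotator_1", "annotator_2"],
    "project": "example-project",
}
print(metric_event)
```

Here the annotators agree on 6 of 8 items (0.75) against a chance agreement of 0.5, giving a kappa of 0.5.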

Immutability and retention

Audit trail records should be immutable, meaning they cannot be altered or deleted after creation, and retained for the duration of the AI system’s lifecycle plus any applicable regulatory retention periods. This is a technical requirement that annotation platforms must support: append-only logging with tamper-evident storage. (For how annotation project management connects to audit trail design, see our post on [annotation project management and scaling].)
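
Tamper evidence is commonly achieved by chaining entries with a cryptographic hash, so that changing any earlier record invalidates every later one. The sketch below shows that idea in memory using only the Python standard library. The class name and event fields are hypothetical, and a production system would also need write-once storage and access controls, which this example does not provide.

```python
import hashlib
import json


class HashChainedLog:
    """Append-only log in which each entry's hash covers the previous entry's hash."""

    GENESIS = "0" * 64

    def __init__(self):
        self._entries = []

    @staticmethod
    def _digest(prev_hash: str, payload: str) -> str:
        return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()

    def append(self, event: dict) -> str:
        prev_hash = self._entries[-1]["hash"] if self._entries else self.GENESIS
        payload = json.dumps(event, sort_keys=True)
        entry_hash = self._digest(prev_hash, payload)
        self._entries.append(
            {"prev_hash": prev_hash, "payload": payload, "hash": entry_hash}
        )
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited, removed, or reordered entry breaks it."""
        prev_hash = self.GENESIS
        for entry in self._entries:
            expected = self._digest(prev_hash, entry["payload"])
            if entry["prev_hash"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


log = HashChainedLog()
log.append({"action": "label_created", "label_id": "lbl-0001", "label": "malignant"})
log.append({"action": "label_reviewed", "label_id": "lbl-0001", "outcome": "approved"})
intact_before = log.verify()

# Simulate tampering with the first record after the fact
log._entries[0]["payload"] = json.dumps(
    {"action": "label_created", "label_id": "lbl-0001", "label": "benign"},
    sort_keys=True,
)
intact_after = log.verify()
print(intact_before, intact_after)
```

The chain detects alteration but does not prevent it, which is why it is usually paired with storage that restricts writes to appends.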

Ethical Data Annotation: Fair Pay, Diverse Workforce, and Bias Auditing

Ethical data annotation is not a separate compliance category; it is woven throughout the EU AI Act’s requirements and reflects broader expectations about responsible AI development. Three dimensions are particularly relevant for annotation teams preparing for August 2026.

Fair compensation and working conditions

While the EU AI Act does not directly regulate annotator pay, the Act’s emphasis on data quality and human oversight creates an indirect economic argument for fair compensation. Annotators who are underpaid, overworked, or operating under exploitative conditions produce lower-quality labels. The resulting training data degrades system performance and may introduce the very biases that Article 10 requires teams to prevent. Organizations citing the EU AI Act’s quality requirements while relying on underpaid labor face both an ethical contradiction and a practical risk.

Workforce diversity as a bias mitigation strategy

Article 10(2)(f)’s requirement to examine and mitigate bias is difficult to fulfill with a homogeneous annotation workforce. If all annotators share the same cultural background, language patterns, and demographic perspective, systematic biases in their labeling will go undetected because no one on the team has a different perspective. A diverse annotation workforce, in terms of geography, language, gender, age, and domain background, is a practical bias mitigation measure that supports Article 10 compliance.

Structured bias auditing

Compliance requires more than a statement that “we checked for bias.” It requires a documented bias auditing process. Teams must define which biases are relevant to the intended purpose, such as demographic under-representation, linguistic bias, and cultural interpretation bias. They must then measure those biases quantitatively in the annotated dataset. Where they identify biases, teams must implement corrective actions. After correction, they must re-measure to verify the mitigation worked effectively. This audit should recur regularly, not happen just once. Biases can enter at any stage of annotation and may evolve as guidelines change.
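
The measure, correct, and re-measure loop can be made concrete with a simple quantitative check. The sketch below compares how often a given label is assigned across groups, before and after a round of re-annotation. The metric (gap in label rates), the 0.15 threshold, and the group and label names are illustrative assumptions. A rate gap on its own does not prove bias, since groups can legitimately differ, so the result is a trigger for investigation.

```python
from collections import defaultdict


def label_rate_by_group(records, label):
    """records: iterable of (group, assigned_label). Returns label rate per group."""
    totals, hits = defaultdict(int), defaultdict(int)
    for group, assigned in records:
        totals[group] += 1
        if assigned == label:
            hits[group] += 1
    return {g: hits[g] / totals[g] for g in totals}


def audit(records, label, max_gap=0.15):
    """One audit pass: per-group rates, largest gap, and pass/fail against max_gap."""
    rates = label_rate_by_group(records, label)
    gap = max(rates.values()) - min(rates.values())
    return {"rates": rates, "gap": gap, "passed": gap <= max_gap}


# Hypothetical toxicity labels for text written in two dialects
before = (
    [("dialect_a", "toxic")] * 2 + [("dialect_a", "ok")] * 8
    + [("dialect_b", "toxic")] * 5 + [("dialect_b", "ok")] * 5
)
# Same items after guideline revision and re-annotation of dialect_b
after = (
    [("dialect_a", "toxic")] * 2 + [("dialect_a", "ok")] * 8
    + [("dialect_b", "toxic")] * 3 + [("dialect_b", "ok")] * 7
)

first_pass = audit(before, "toxic")
second_pass = audit(after, "toxic")
print(first_pass["passed"], second_pass["passed"])
```

Both passes, with their dates and the corrective action taken in between, would be kept as part of the documented audit.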

Compliance Checklist for Annotation Teams

The following checklist translates the EU AI Act’s requirements into actionable items for annotation teams. Each item maps to specific regulatory provisions.

Data Governance (Article 10)

- Document all data sources, collection methods, and licensing/consent terms for every dataset entering annotation.

- Maintain versioned annotation guidelines with change logs showing what was updated and when.

- Document the annotation methodology for each project (manual, AI-assisted, programmatic, or hybrid), including the specific tools and models used.

- Assess dataset representativeness against the intended deployment population and document any gaps.

- Conduct bias audits on annotated datasets, document findings, and implement corrective measures.

- Maintain records of data preparation operations including annotation, cleaning, and enrichment.

Human Oversight (Article 14)

- Ensure human-in-the-loop review is embedded in annotation workflows, especially where AI-assisted pre-labeling is used.

- Train annotators to recognize and resist automation bias when reviewing AI-generated pre-labels.

- Document annotator qualifications, training records, and domain expertise.

- Establish and document escalation protocols for ambiguous or contested labels.

- Record all human override decisions and the reasoning behind them.

Transparency (Articles 13, 50, 53)

- Prepare training data summaries describing dataset composition, annotation methods, and quality metrics.

- Document any synthetic data used in the training set, including generation methods and validation results.

- Be prepared to disclose annotation provenance to downstream deployers and, if required, to regulatory authorities.

Audit Trails (Articles 12, 18)

- Configure annotation platforms to capture immutable, timestamped records of all annotation activities.

- Maintain records of inter-annotator agreement scores, quality metrics, and review outcomes.

- Retain audit trail data for the AI system’s full lifecycle plus applicable retention periods.

- Ensure audit trail records are accessible for conformity assessments and regulatory inquiries.

Ethical Obligations

- Compensate annotators fairly and ensure working conditions support sustained quality.

- Build diverse annotation teams to support bias detection and mitigation.

- Conduct recurring bias audits, not one-time checks, throughout the annotation lifecycle.

- Document all ethical measures as part of the quality management system required under Article 17.

What Happens If You Don’t Comply

Non-compliance is not hypothetical. The EU has established national market surveillance authorities to enforce the AI Act, and the European AI Office coordinates oversight for GPAI models. Spain’s AESIA has already published 16 guidance documents and practical compliance templates. Conformity assessment procedures (Article 43) require documented evidence of compliance with Articles 10 and 14 before a high-risk system can be placed on the market.

For annotation teams, the practical consequence of non-compliance is that the AI system they produced training data for cannot pass its conformity assessment. The provider cannot market the system in Europe. The training data must be re-examined, re-documented, or re-annotated at significant additional cost and delay. Investing in compliance from the start of the annotation process is far cheaper than retrofitting it after the data has been produced. (For how medical imaging annotation intersects with EU MDR and AI Act compliance, see our post on [medical imaging annotation].)

Frequently Asked Questions

How does the EU AI Act affect data annotation?

Article 10 explicitly names “annotation” and “labelling” as data-preparation operations subject to mandatory governance requirements for high-risk AI systems. Annotation teams must document their processes, conduct bias audits, ensure representativeness, and maintain audit trails.

What does EU AI Act Article 14 require for annotation?

Article 14 requires that high-risk AI systems enable effective human oversight. For annotation, this means embedding human review in labeling workflows, training annotators to resist automation bias when reviewing AI pre-labels, and documenting all human oversight measures.

What are the EU AI Act’s training data requirements?

Article 10 requires that training data be relevant, sufficiently representative, and, to the best extent possible, free of errors and complete. It mandates documentation of data sources, annotation methods, bias examination, and gap identification. Datasets must have appropriate statistical properties for the intended deployment population.

What is data provenance in AI compliance?

Data provenance is the documented chain of custody from raw data through annotation to model training. It includes data source, annotator identity, annotation method, guideline version, timestamps, review chain, and tool versions, making compliance demonstrable to regulators.

What is an annotation audit trail?

An annotation audit trail is the complete, immutable record of every action taken during the annotation process: label creation, modification, review, approval, guideline changes, quality measurements, and data access events.

When do EU AI Act annotation requirements take effect?

The core framework for high-risk AI systems, including Articles 10, 14, and 50, applies from August 2, 2026. High-risk systems embedded in regulated products (like medical devices) have until August 2, 2027.

What are the penalties for non-compliance?

Fines can reach €15 million or 3% of global annual turnover for high-risk system violations, and €35 million or 7% of turnover for prohibited AI practices.