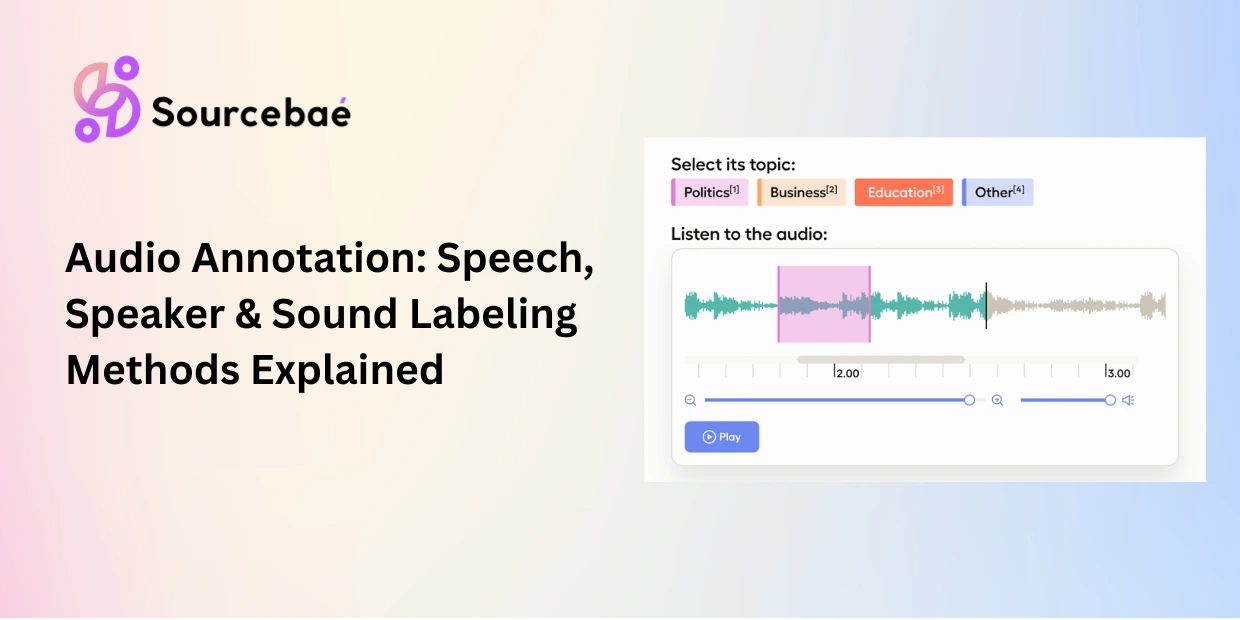

Audio annotation is the process of adding structured labels transcriptions, speaker tags, timestamps, emotion markers, and sound event classifications to raw audio data so that machine learning models can learn to transcribe speech, identify speakers, detect emotions, and classify sounds.

Unlike image or text annotation, audio annotation operates in the temporal dimension: annotators work with waveforms, spectrograms, and playback timelines rather than pixels or text spans. This makes it a distinct discipline that requires different tools, different skills, and different quality benchmarks. Speech annotation for a voice assistant demands native-language fluency and phonetic awareness. Audio data labeling for a security system requires the ability to distinguish a glass-breaking sound from a door slam at 2 a.m.

The demand for audio labeling for AI is accelerating as voice interfaces, transcription services, call center analytics, and speech-to-text systems become embedded in everyday workflows. OpenAI’s Whisper model demonstrated that large-scale voice annotation for speech recognition can dramatically improve ASR accuracy, but even the best models require human-annotated data for domain-specific fine-tuning, quality validation, and edge case handling.

This guide covers every major audio annotation method used in production speech and sound AI, including when to use each technique, the quality metrics that matter, and the challenges teams encounter when labeling audio at scale.

Speech-to-Text Annotation: The Foundation of ASR

Speech-to-text annotation (also called transcription annotation) is the process of converting spoken language into written text with precise timestamps, creating the ground truth data that automatic speech recognition (ASR) models learn from. It is the most fundamental form of speech annotation and the highest-volume audio labeling task in the industry.

How it works

An annotator listens to an audio recording and produces a verbatim text transcript. Each word or phrase is aligned to its corresponding timestamp in the audio, typically at the word level or segment level. The output is a time-aligned transcript that maps spoken language to written text with millisecond precision.

Speech-to-text annotation quality is measured primarily by Word Error Rate (WER) the percentage of words in the transcript that differ from the reference. Production ASR systems target WER below 5% for clean speech and below 15% for noisy or accented speech. The quality of the ground truth annotations directly determines this ceiling: if your training transcripts contain errors, your model inherits them.

Use cases

Speech-to-text annotation powers voice assistants (Alexa, Siri, Google Assistant), meeting transcription platforms (Otter, Fireflies), medical dictation systems, legal transcription services, subtitle and caption generation, and accessibility tools for hearing-impaired users. Every time you dictate a message or read auto-generated subtitles, you are interacting with a model trained on human-annotated voice annotation for speech recognition data.

Common pitfalls

Inconsistent transcription conventions create noisy training data. Does “can’t” get transcribed as “can’t” or “cannot”? Are filler words (“um,” “uh,” “like”) included or omitted? Are numbers written as digits or spelled out? Detailed transcription guidelines covering these conventions are essential and they must be established before annotation begins.

Background noise and overlapping speech are the biggest practical challenges in speech-to-text annotation. A call center recording with hold music, a meeting with three people talking simultaneously, or a street-level recording with traffic noise all require annotators to parse signal from noise. For noisy audio, source separation preprocessing can isolate speech channels before human annotation begins.

Accent and dialect variation causes transcription errors when annotators are unfamiliar with the speaker’s linguistic background. Best practice: match annotators to the language and dialect of the audio they are transcribing. A British English annotator will transcribe American English reliably, but may struggle with Singaporean English or Nigerian English without specific training.

Speaker Diarization Annotation: Identifying Who Spoke When

Speaker diarization annotation is the process of segmenting an audio recording by speaker labeling which person is speaking at every point in the recording. It answers the question “who spoke when?” and transforms a single audio stream into a structured, speaker-attributed transcript.

How it works

The annotator listens to a multi-speaker recording and marks the start and end timestamps for each speaker’s turn. Each segment is tagged with a speaker identifier (Speaker A, Speaker B, or when known the speaker’s name or role: “Doctor,” “Patient,” “Agent,” “Customer”). The output is a timeline of speaker turns that can be merged with a transcription to produce a fully attributed transcript.

Speaker diarization annotation accuracy is measured by Diarization Error Rate (DER) the percentage of audio time that is incorrectly attributed to the wrong speaker, missed entirely, or falsely detected as speech. State-of-the-art diarization models like Pyannote 3.1 achieve DER of 11–19% on standard benchmarks, but domain-specific fine-tuning with high-quality speaker diarization annotation data can push this significantly lower.

Use cases

Speaker diarization annotation is essential for call center analytics (separating agent and customer speech for quality monitoring), meeting transcription (attributing comments to specific participants), podcast processing (identifying host and guest), telemedicine documentation (creating speaker-attributed clinical records research shows patient history contributes to 76% of initial diagnoses), legal depositions (tracking who said what under oath), and media monitoring (attributing quotes to specific public figures).

Common pitfalls

Overlapping speech is the core challenge in speaker diarization annotation. When two people talk simultaneously, the annotator must decide how to segment the overlap assign it to the dominant speaker, split it, or mark it as a simultaneous segment. Guidelines must define the overlap handling policy before annotation begins.

Speaker re-identification across long recordings creates inconsistency. In a 90-minute meeting, Speaker B may go silent for 30 minutes and then speak again. Annotators must correctly link the resumed speech to the same speaker identity, which requires careful attention and sometimes re-listening to earlier segments for voice matching.

Unknown speaker count adds difficulty. In some recordings, the number of speakers is not known in advance. Annotators must detect new speakers as they appear and create new identifiers dynamically. This is significantly harder than annotating recordings where the speaker count is pre-defined.

Emotion and Sentiment Detection in Audio

Emotion annotation in audio classifies the speaker’s emotional state based on vocal characteristics tone, pitch, speaking rate, volume, and prosodic patterns. Unlike text-based sentiment annotation (which labels written words), audio emotion detection captures how something is said, not just what is said.

How it works

Annotators listen to speech segments and assign emotion labels from a predefined taxonomy. Common schemas use categorical emotions (happy, sad, angry, fearful, neutral, surprised) or dimensional models (valence-arousal scales ranging from negative to positive and calm to excited). Some projects combine both: a categorical label plus a valence-arousal score for each segment.

Use cases

Call center quality monitoring (detecting frustrated customers in real time), mental health screening (identifying vocal markers of depression or anxiety), in-vehicle safety systems (detecting driver drowsiness or aggression), customer experience analytics, and media content analysis.

Common pitfalls

Subjectivity is highest in emotion annotation. Two annotators listening to the same segment may legitimately disagree on whether the speaker sounds “frustrated” or “impatient.” Multi-annotator consensus with at least three annotators per segment is essential. Target Cohen’s Kappa above 0.7 for categorical emotion labels lower than the 0.8 threshold for most other annotation types because of inherent subjectivity.

Cultural variation in emotional expression affects annotation consistency. Vocal expressions of politeness, anger, or enthusiasm differ across cultures. Annotators should be culturally matched to the speakers in the audio whenever possible.

Sound Event Labeling: Beyond Speech

Sound event labeling (also called audio event detection annotation) classifies non-speech audio events environmental sounds, mechanical noises, alarms, and other acoustic signals. This audio annotation type trains models that must understand sound environments beyond human speech.

How it works

Annotators listen to audio recordings and tag each identifiable sound event with a class label and start/end timestamps. Events might include glass breaking, a door closing, a dog barking, a siren, machinery operating, gunshots, footsteps, or vehicle engines. The annotation schema defines the event taxonomy, and annotators mark every occurrence within the recording.

Use cases

Security and surveillance systems (detecting gunshots, glass breaking, screams), smart home devices (recognizing doorbells, smoke alarms, baby crying), industrial monitoring (identifying machinery malfunction sounds), wildlife research (classifying bird calls, whale songs, insect patterns), and autonomous vehicles (detecting emergency sirens, honking).

Common pitfalls

Simultaneous events require multi-label annotation a recording may contain traffic noise, a siren, and a honking horn all at once. Your schema must support overlapping event labels with independent timestamps.

Event boundary ambiguity makes consistent annotation difficult. When exactly does a “door slam” start and end? Does the reverb count? Defining precise onset/offset rules in guidelines prevents inconsistent timestamp placement across annotators.

Acoustic Scene Classification

Acoustic scene classification labels the overall sound environment of a recording rather than individual events within it. Instead of tagging specific sounds, annotators classify the recording’s setting: indoor office, outdoor street, restaurant, train station, park, airport terminal, or shopping mall.

This audio data labeling technique trains context-aware AI systems that adapt behavior based on the acoustic environment. A hearing aid that detects a noisy restaurant and adjusts its noise suppression profile. A smartphone that recognizes a meeting room and automatically silences notifications. A security system that establishes a “normal” acoustic baseline for a location and flags deviations.

Multilingual and Accent Challenges in Audio Annotation

Scaling audio annotation across languages introduces complexity that monolingual projects do not face.

Multilingual speech-to-text annotation requires annotators who are native speakers of the target language not just fluent, but natively familiar with colloquialisms, regional dialects, and code-switching patterns. A Spanish transcription annotator from Mexico City will produce different transcripts than one from Buenos Aires, and both are correct for their respective dialects.

Code-switching when speakers alternate between two or more languages within a single conversation is particularly challenging for speech annotation. “I’ll send you the informe by Friday, ¿vale?” requires the annotator to transcribe both languages accurately and the transcription convention to define how mixed-language segments are handled.

Low-resource languages have limited pre-trained models and fewer available annotators, making audio labeling for AI significantly more expensive and time-consuming. For these languages, audio annotation projects often begin with small pilot datasets, use transfer learning from higher-resource languages, and rely on specialized annotation partners with access to native-speaker annotator pools.

AI-Assisted Audio Annotation in 2026

Modern audio annotation pipelines increasingly combine automated models with human review. OpenAI’s Whisper and its successors can generate draft transcriptions in 50+ languages, providing a pre-labeled starting point that human annotators correct rather than create from scratch. This model-in-the-loop approach reduces audio annotation time by 40–60% for transcription tasks.

For speaker diarization annotation, tools like Pyannote 3.1 and NVIDIA NeMo generate initial speaker segmentations that humans verify and correct. For sound event detection, pre-trained audio classification models flag candidate events that annotators confirm or reject.

However, human oversight remains essential. Automated models fail systematically on domain-specific vocabulary (medical terminology, legal jargon, proprietary product names), heavily accented speech, noisy environments, and overlapping speakers. Audio labeling for AI in 2026 is a human-AI collaboration not full automation.

Choosing the Right Audio Annotation Method

Selecting the correct audio data labeling approach depends on what your model needs to learn from sound.

Choose speech-to-text annotation when your model must convert spoken language to written text for ASR systems, transcription services, subtitle generation, or voice command interfaces. This is the most common voice annotation for speech recognition task.

Choose speaker diarization annotation when your model must identify individual speakers in multi-speaker recordings for call center analytics, meeting transcription, legal proceedings, or medical documentation.

Choose emotion detection annotation when your model must assess the speaker’s emotional state from vocal characteristics for customer experience monitoring, mental health screening, or in-vehicle safety systems.

Select sound event labeling when your model must detect and classify non-speech sounds for security systems, smart home devices, industrial monitoring, or wildlife research.

Select acoustic scene classification when your model must understand the overall sound environment for context-aware devices that adapt behavior based on location type.

Combine methods when your application requires multi-layer audio understanding. A call center AI might need speech-to-text annotation (to transcribe the conversation), speaker diarization annotation (to separate agent and customer), and emotion detection (to flag frustrated callers) all applied to the same recording. Multi-layer audio annotation produces the richest training signals but requires careful coordination across annotation passes.

Frequently Asked Questions

What is audio annotation?

Audio annotation is the process of adding structured labels to raw sound data transcriptions, speaker tags, timestamps, emotion markers, and sound event classifications so that machine learning models can learn to transcribe speech, identify speakers, detect emotions, and classify sounds. It is a distinct discipline from image or text annotation because it operates in the temporal dimension, requiring annotators to work with waveforms, spectrograms, and playback timelines.

What is speech-to-text annotation used for?

Speech-to-text annotation creates the ground truth transcriptions that automatic speech recognition (ASR) models learn from. It powers voice assistants, meeting transcription platforms, medical dictation systems, subtitle generation, and accessibility tools. Quality is measured by Word Error Rate (WER), with production systems targeting below 5% for clean speech. Voice annotation for speech recognition requires native-language fluency, familiarity with domain vocabulary, and strict adherence to transcription conventions.

What is speaker diarization annotation?

Speaker diarization annotation segments audio recordings by speaker, labeling who spoke when throughout the recording. It produces speaker-attributed transcripts essential for call center analytics, meeting transcription, telemedicine documentation, and legal proceedings. Accuracy is measured by Diarization Error Rate (DER). State-of-the-art models like Pyannote 3.1 achieve DER of 11–19% on standard benchmarks, but domain-specific fine-tuning with high-quality speaker diarization annotation data can improve this significantly.

How is audio data labeling different from text annotation?

Audio data labeling operates in the temporal dimension annotators work with sound waves, timestamps, and playback rather than written words. Audio introduces unique challenges: background noise, overlapping speech, accent variation, and the need for real-time temporal alignment. Text annotation labels static content. Audio annotation labels dynamic, time-dependent signals where the same word can carry different meaning depending on tone, speed, and emphasis.

What tools are used for audio labeling for AI?

Leading platforms for audio labeling for AI in 2026 include Label Studio (open-source, supports audio waveform annotation with timestamps), Labelbox (enterprise-grade with multi-modal support), SuperAnnotate (AI-assisted audio workflows), and Audacity (open-source for basic segmentation and labeling). For automated pre-labeling, OpenAI Whisper handles transcription, Pyannote 3.1 handles speaker diarization, and NVIDIA NeMo provides end-to-end ASR with diarization. Most production teams use a combination: automated pre-labeling followed by human verification and correction.

How much does audio annotation cost?

Costs for audio annotation depend on the task, audio quality, and language. Basic speech transcription in English costs approximately $1–$3 per audio minute for outsourced work. Speaker diarization annotation adds $0.50–$1.50 per minute on top of transcription. Emotion annotation ranges from $2–$5 per minute due to higher subjectivity and multi-annotator requirements. Multilingual and low-resource language speech annotation can cost 2–3x more than English due to limited annotator availability. AI-assisted pre-labeling reduces these costs by 40–60%.

What quality metrics matter for audio annotation?

The key quality metrics for audio annotation are Word Error Rate (WER) for transcription accuracy (target: below 5% for clean speech), Diarization Error Rate (DER) for speaker attribution accuracy, Cohen’s Kappa for inter-annotator agreement on categorical tasks like emotion detection (target: above 0.7), and timestamp precision for temporal alignment (typically measured in milliseconds). For production audio data labeling, implement multi-pass review workflows: automated pre-labeling → human annotation → secondary review → spot-check audit.